
TLDR

AI gateways have become mandatory enterprise infrastructure as LLMs move into customer-facing, regulated, and business-critical workflows where direct model integrations create security, compliance, and operational risk.

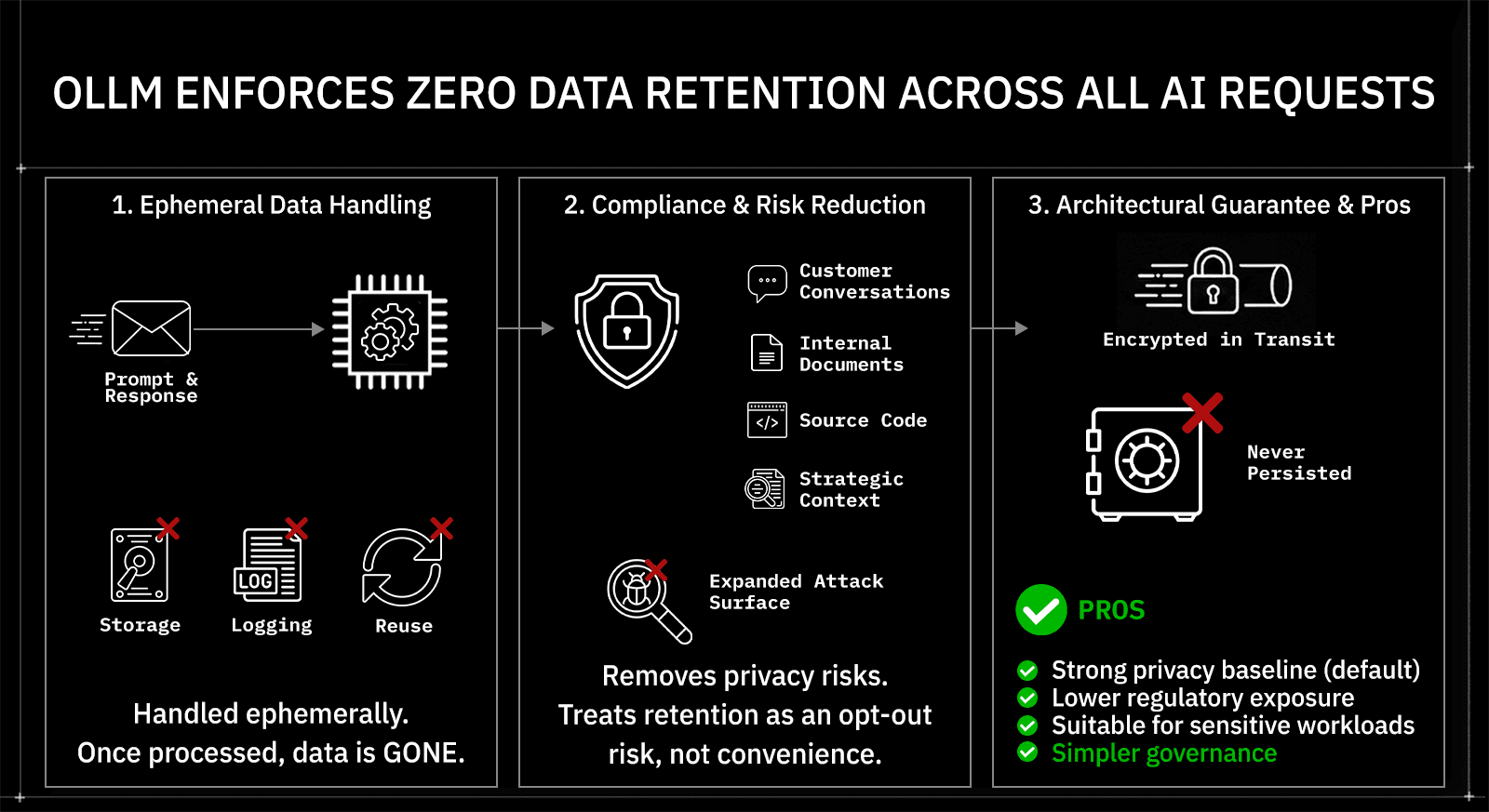

OLLM enforces zero data retention by design, ensuring prompts and responses are processed ephemerally and never stored, reducing exposure for sensitive enterprise and regulated data.

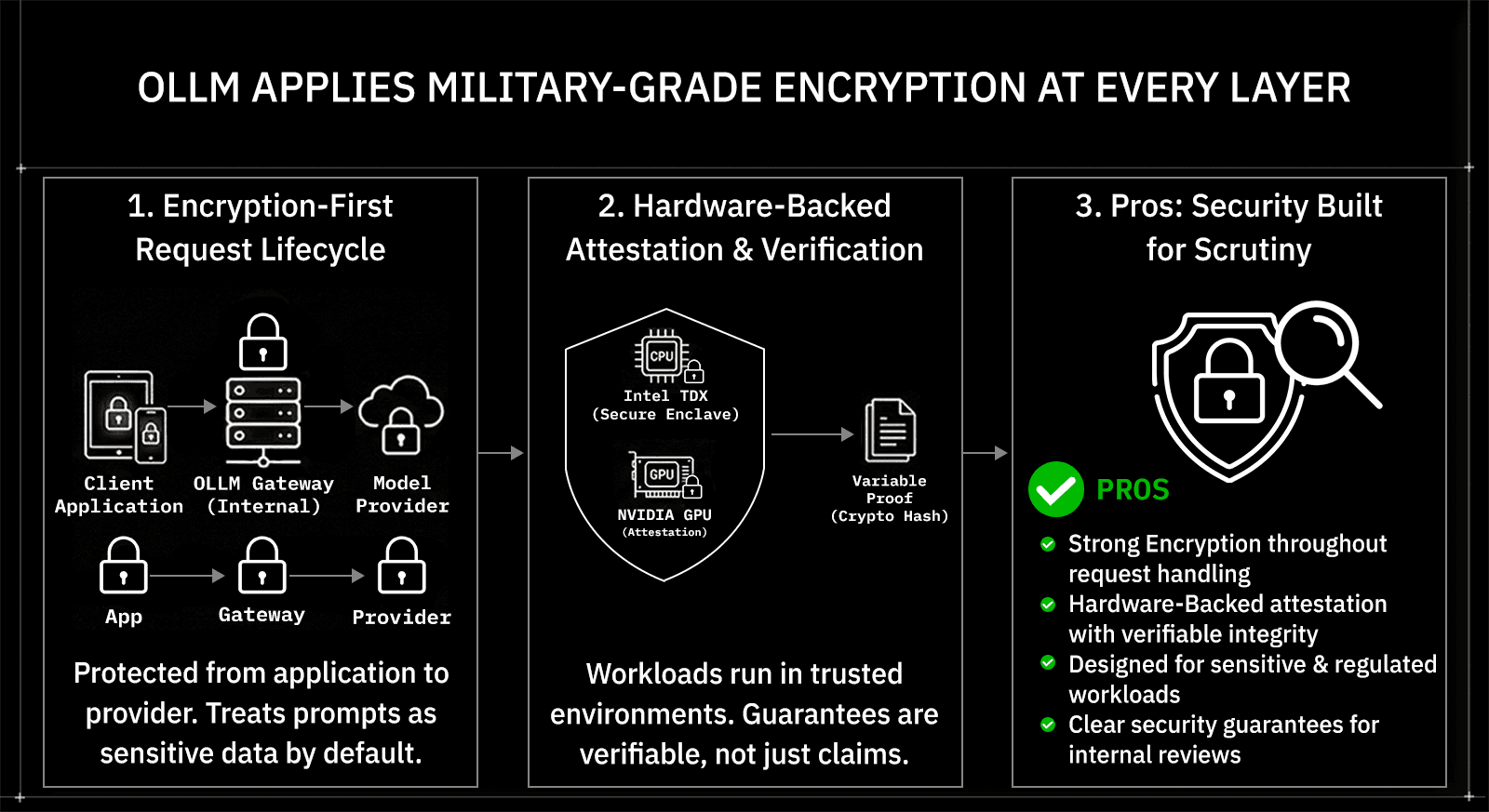

OLLM uses confidential computing and strong encryption across the full request lifecycle, protecting AI traffic from the application boundary through execution without relying on logging or process-based controls.

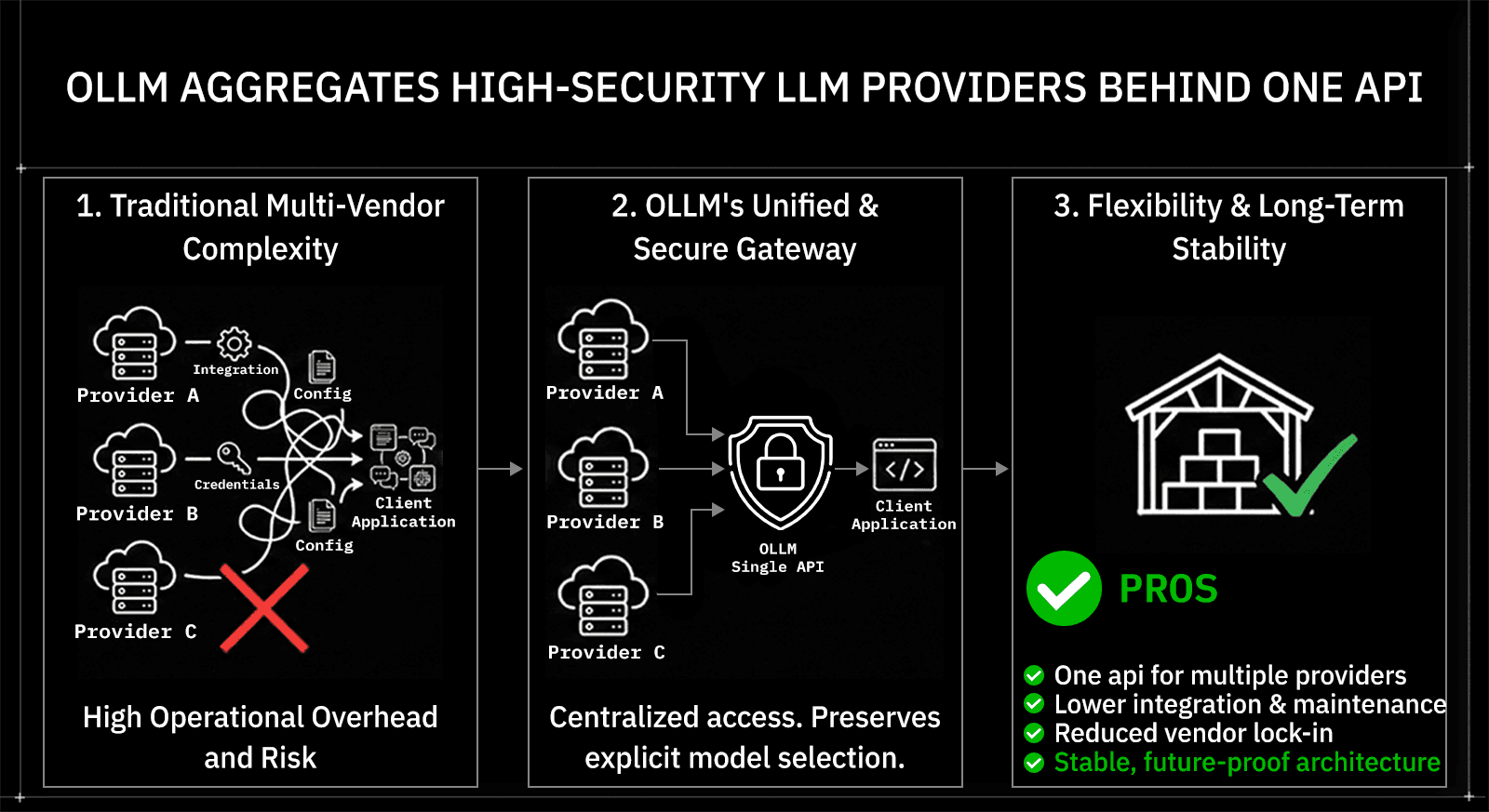

OLLM aggregates multiple high-security LLM providers behind a single API, allowing teams to explicitly choose models while avoiding vendor lock-in and reducing integration complexity over time.

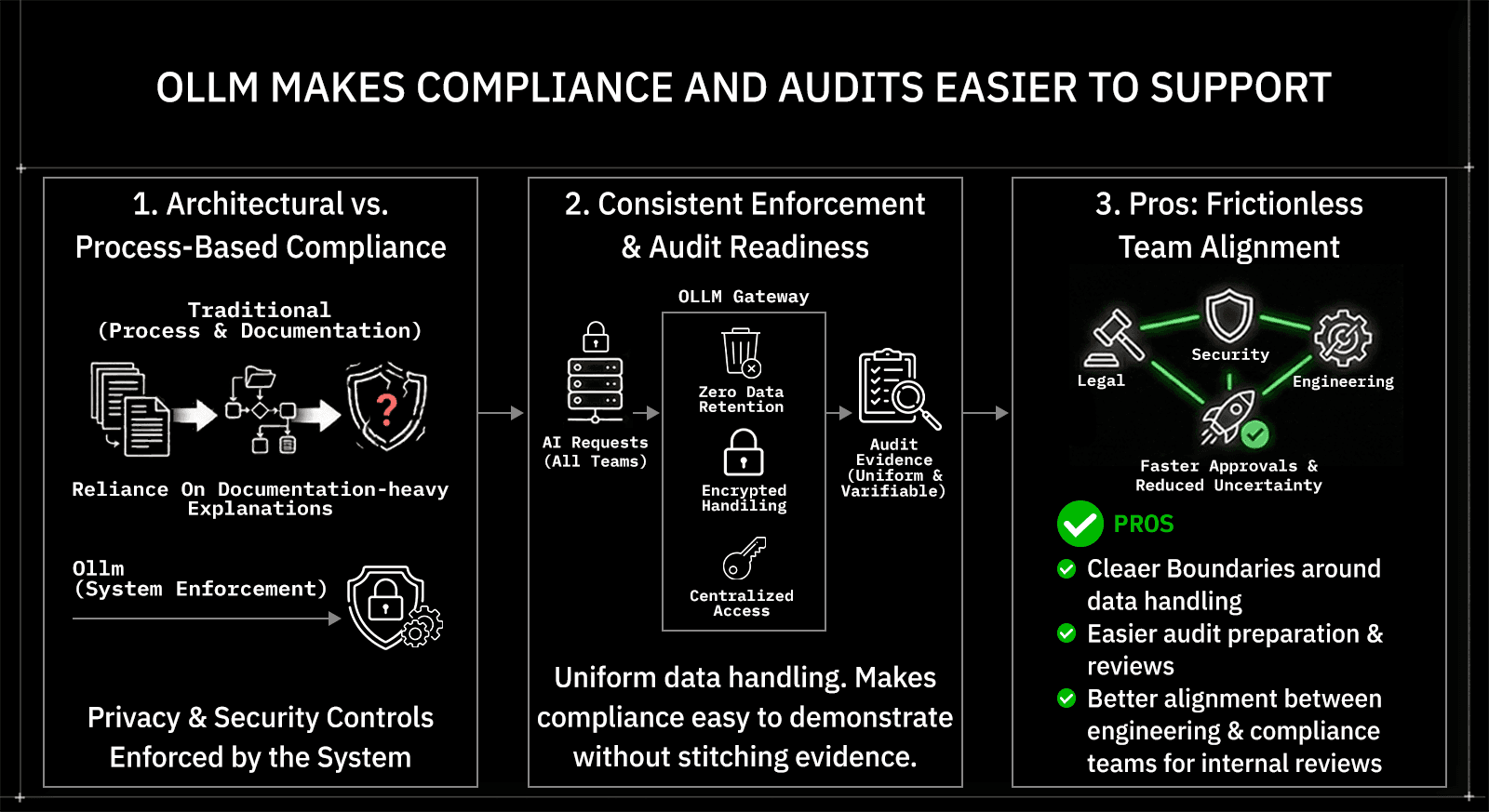

OLLM is built for long-term enterprise scale, making audits, governance, and multi-team AI adoption easier as usage grows across products, regions, and departments.

Why AI Gateways Have Become Core Enterprise Infrastructure in 2026

AI systems have moved from experiments to everyday production workloads. In 2026, enterprises are running large language models across customer support, internal tools, data analysis, and automation pipelines. Each use case sends sensitive prompts, customer data, internal documents, and proprietary logic outside the application boundary. Without a dedicated control layer, these requests spread across providers, teams, and environments, increasing security, compliance, and operational risk.

AI gateways exist to close this control gap. They sit between applications and model providers, creating a consistent entry point for security, governance, and access management. Instead of every team integrating models differently, a gateway enforces shared rules around how data is sent, secured, and handled. This shift turns AI access from an ad hoc integration problem into managed infrastructure.

This write-up explains why that shift matters and how to choose the right gateway. Using OLLM as a reference point, it breaks down what AI gateways do, what enterprises should evaluate in 2026, and the specific reasons OLLM has emerged as a strong choice for organizations prioritizing privacy, security, and long-term scale.

What an AI Gateway Actually Does Inside Enterprise AI Stacks

AI gateways sit between applications and model providers to control how AI is used. Instead of each service calling an LLM API directly, requests flow through the gateway first. The gateway implements concrete security controls, including encrypted request handling, credential isolation, and provider-level access boundaries. It also enforces access rules, including which teams can call which providers, how credentials are managed, and how AI usage is scoped across environments. Only after these checks pass is the request forwarded to the selected model, with the response returned to the application through the same path. This keeps AI usage consistent across teams without requiring changes to application code.
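To make that request flow concrete, here is a minimal Python sketch of the checks a gateway might run before forwarding a call. Everything in it is hypothetical: the team and provider names, the `ACCESS_POLICY` table, and the stubbed `call_provider` function are illustrations, not OLLM's API. What it shows is that the access rule is evaluated and the provider credential is looked up inside the gateway, so application code never holds provider keys.

```python
from dataclasses import dataclass

# Hypothetical policy table: which teams may call which providers.
# Team and provider names are illustrative, not part of any OLLM API.
ACCESS_POLICY: dict[str, set[str]] = {
    "support-bot": {"provider-a"},
    "analytics": {"provider-a", "provider-b"},
}

# Provider credentials are held only inside the gateway (credential
# isolation); applications never receive them.
_PROVIDER_KEYS: dict[str, str] = {
    "provider-a": "key-a-held-by-gateway",
    "provider-b": "key-b-held-by-gateway",
}


@dataclass
class GatewayRequest:
    team: str
    provider: str  # chosen explicitly by the calling team
    model: str
    prompt: str


class AccessDenied(Exception):
    pass


def call_provider(provider: str, api_key: str, model: str, prompt: str) -> str:
    # Stub standing in for the real outbound call to the provider.
    return f"[{provider}/{model}] response to {len(prompt)} chars"


def handle(request: GatewayRequest) -> str:
    # 1. Enforce the access rule for this team.
    allowed = ACCESS_POLICY.get(request.team, set())
    if request.provider not in allowed:
        raise AccessDenied(f"team {request.team!r} may not call {request.provider!r}")
    # 2. Attach the gateway-held credential, then forward the request.
    api_key = _PROVIDER_KEYS[request.provider]
    return call_provider(request.provider, api_key, request.model, request.prompt)
```

With this policy, `handle(GatewayRequest("support-bot", "provider-b", "model-x", "hi"))` raises `AccessDenied`, because `support-bot` is only granted `provider-a`.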

The value comes from what the gateway standardizes. An AI gateway encrypts request traffic, abstracts provider-specific APIs, and centralizes policy enforcement. In the model OLLM follows, the gateway does not choose models dynamically or shift traffic between them on its own. Teams remain responsible for deciding which models to use, while the gateway ensures every interaction follows the same security and governance expectations.

The difference shows up as AI usage expands.

| Without an AI Gateway | With an AI Gateway |
| --- | --- |
| Direct model integrations | Single access layer |
| Varying security practices | Consistent controls everywhere |
| Difficult reviews and audits | Clear governance boundaries |
| Tighter provider coupling | Easier provider changes |

This setup explains why AI gateways have become a foundational layer for enterprises building AI systems meant to scale safely.

This foundation sets the baseline, but not all AI gateways approach these responsibilities in the same way. Differences in data handling, security guarantees, provider access, and enterprise readiness directly impact risk and scalability. The sections below break down the most important reasons enterprises are choosing OLLM as their AI gateway in 2026, starting with its architecture-level approach to data privacy.

1. OLLM Enforces Zero Data Retention Across All AI Requests

Zero data retention defines OLLM’s privacy posture. Every prompt and response passing through OLLM is handled ephemerally, with no storage, logging, or reuse. Once a request is processed and returned, the data is gone. This design choice removes an entire class of privacy and compliance risks associated with retaining sensitive AI traffic.

Retention matters because AI prompts increasingly contain regulated and proprietary data. Customer conversations, internal documents, source code, and strategic context often flow through LLM calls. When gateways store this information, even for a brief period, they expand the attack surface and complicate compliance reviews. OLLM's approach avoids that tradeoff by treating retention as a risk to eliminate rather than a debugging convenience.

The result is simpler governance with fewer assumptions. Security and legal teams do not need to interpret log policies or retention windows. The guarantee is architectural: data is encrypted in transit and never persisted.
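Zero retention is a property of OLLM's own infrastructure, but the same discipline applies to any application code that touches prompts. The Python sketch below is a generic pattern, not OLLM's implementation; the `Exchange` type and `process` function are hypothetical. It keeps prompt and response text out of object representations and logs only request metadata, so an accidental log call does not write content anywhere.

```python
import logging
import time
import uuid
from dataclasses import dataclass, field
from typing import Callable

log = logging.getLogger("gateway")


@dataclass(frozen=True)
class Exchange:
    request_id: str
    # repr=False keeps prompt/response text out of repr(), str(), and
    # f-strings, so an accidental log.info(exchange) cannot leak content.
    prompt: str = field(repr=False)
    response: str = field(repr=False)


def process(prompt: str, model_call: Callable[[str], str]) -> str:
    start = time.perf_counter()
    exchange = Exchange(str(uuid.uuid4()), prompt, model_call(prompt))
    # Only metadata (request id and duration) is logged; nothing in this
    # function writes the prompt or response text to a log or store.
    log.info("request %s done in %.3fs", exchange.request_id, time.perf_counter() - start)
    return exchange.response
```

The design choice is defensive: rather than relying on every developer to remember not to log content, the data type itself refuses to print it.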

Pros

Strong privacy baseline by default

Lower regulatory and breach exposure

Suitable for sensitive enterprise workloads

2. OLLM Aggregates High-Security LLM Providers Behind One API

Provider aggregation reduces complexity without reducing control. OLLM exposes a single, consistent API that connects enterprises to a broad set of high-security LLM providers. Teams select the models they want to use, while the gateway removes the need to manage separate integrations, credentials, and security configurations for each provider. This keeps architecture clean as AI usage expands across teams and products.

Aggregation matters because enterprise AI rarely stays single-vendor. Different teams optimize for different needs: cost, performance, regional availability, or compliance posture. Managing these choices directly at the application layer increases operational overhead and makes changes risky. OLLM centralizes provider access while preserving explicit model selection, allowing teams to switch or add providers without rewriting application logic.

The outcome is flexibility without architectural churn. AI systems remain stable even as provider strategies evolve, which is critical for long-term planning in 2026 and beyond.
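Provider aggregation with explicit model selection can be sketched as a set of adapters behind one entry point. In this Python sketch, the `provider/model` reference format, both payload shapes, and the provider names are assumptions for illustration; they are not OLLM's documented request format or any real provider's schema. Each adapter translates one uniform request into a provider-specific payload, and the calling team, not the gateway, names the target.

```python
from typing import Callable

# Hypothetical adapters: each turns a uniform (model, prompt) request into
# the payload shape a given provider expects. Shapes are illustrative only.
def to_provider_a(model: str, prompt: str) -> dict:
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}


def to_provider_b(model: str, prompt: str) -> dict:
    return {"model_id": model, "input": {"text": prompt}}


ADAPTERS: dict[str, Callable[[str, str], dict]] = {
    "provider-a": to_provider_a,
    "provider-b": to_provider_b,
}


def build_payload(model_ref: str, prompt: str) -> tuple[str, dict]:
    """Resolve an explicit 'provider/model' choice into a provider payload."""
    provider, sep, model = model_ref.partition("/")
    if not sep or provider not in ADAPTERS:
        raise ValueError(f"unknown provider in {model_ref!r}")
    return provider, ADAPTERS[provider](model, prompt)
```

Moving a workload from `provider-a/...` to `provider-b/...` changes only the `model_ref` string, which would typically live in configuration; the code that calls `build_payload` stays the same.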

Pros

One API for multiple enterprise-ready providers

Lower integration and maintenance effort

Reduced vendor lock-in over time

3. OLLM Applies Military-Grade Encryption at Every Layer

Encryption-first design defines how OLLM secures AI traffic. Every request passing through the gateway is protected by strong, enterprise-grade encryption from the moment it leaves the application until it reaches the selected model provider. AI prompts are treated as sensitive data by default, not as transient messages that can rely on minimal transport security.
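On the application side, the first hop of that encrypted path is the client's TLS connection to the gateway. The Python sketch below uses only the standard `ssl` module; requiring TLS 1.3 as the minimum version is an assumed policy for illustration, not a documented OLLM requirement.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    # create_default_context() turns on certificate verification and
    # hostname checking against the system trust store.
    ctx = ssl.create_default_context()
    # Assumed policy: refuse to negotiate anything older than TLS 1.3.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx
```

The resulting context can be passed to `urllib.request.urlopen(url, context=ctx)` or `http.client.HTTPSConnection(host, context=ctx)`, with the gateway address being whatever your deployment uses.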

Layered protection matters because exposure is not limited to the network edge. Many platforms secure data in transit or at rest, but leave internal handling loosely defined. OLLM extends encryption across the full request lifecycle and pairs it with zero data retention. Prompts and responses are never stored, logged, or persisted, removing the possibility of accessing sensitive data from a retained database if systems, services, or credentials are compromised.

This model is reinforced with hardware-level attestation. OLLM runs secure workloads inside trusted execution environments using Intel TDX, with NVIDIA GPU attestation verifying the integrity of compute handling AI requests. Cryptographic hash values are exposed for each request path, allowing enterprises to independently verify that prompts are processed inside attested, encrypted environments. Security guarantees shift from trust-based claims to verifiable proof.
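The last step of checking a published hash value can be sketched in a few lines of Python. This covers only the comparison. The artifact format, where reference values come from, and the default algorithm are assumptions, and a complete Intel TDX or NVIDIA GPU attestation check also validates the signed quote or report and its certificate chain, which this sketch does not do. TDX measurement registers are SHA-384 values, so the algorithm is a parameter here.

```python
import hashlib
import hmac


def digest_matches(artifact: bytes, expected_hex: str, algorithm: str = "sha384") -> bool:
    """Return True if the artifact's digest equals a published reference value.

    Only the comparison step is shown; signature and certificate-chain
    validation of the attestation evidence are out of scope here.
    """
    actual = hashlib.new(algorithm, artifact).hexdigest()
    # compare_digest avoids revealing, through timing, how many leading
    # characters matched.
    return hmac.compare_digest(actual, expected_hex.strip().lower())
```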

The result is a security posture built for scrutiny. Encryption is applied consistently, data is never retained, and execution environments are verifiable, making it easier for security teams to assess risk and approve AI usage across critical workflows.

Pros

End-to-end encryption across request handling

Zero data retention with no prompt or response storage

Hardware-backed attestation with verifiable integrity

Designed for sensitive and regulated workloads

4. OLLM Makes Compliance and Audits Easier to Support

Compliance becomes simpler when guarantees are architectural and provable. OLLM enforces privacy and security controls at the system level rather than relying on process, policy, or trust. This approach reduces the need for documentation-heavy explanations of how AI data is handled, especially in regulated or governance-heavy environments.

Audit readiness improves when controls produce verifiable evidence. Every AI request flows through the same gateway with zero data retention, encrypted handling, and centralized access controls. In addition, OLLM’s use of trusted execution environments and cryptographic attestation provides concrete proof of how and where requests are processed. These attestation artifacts and hash-based verifications give auditors something they can validate directly, rather than relying solely on written assurances.

The result is less friction across review cycles. Legal, security, and engineering teams can align on a shared, evidence-based understanding of AI data flows, thereby shortening approval timelines and reducing uncertainty as AI usage expands.

Pros

Clear, provable boundaries around AI data handling

Cryptographic attestation to support audit and review processes

Easier preparation for internal and external compliance checks

Better alignment between engineering and compliance teams

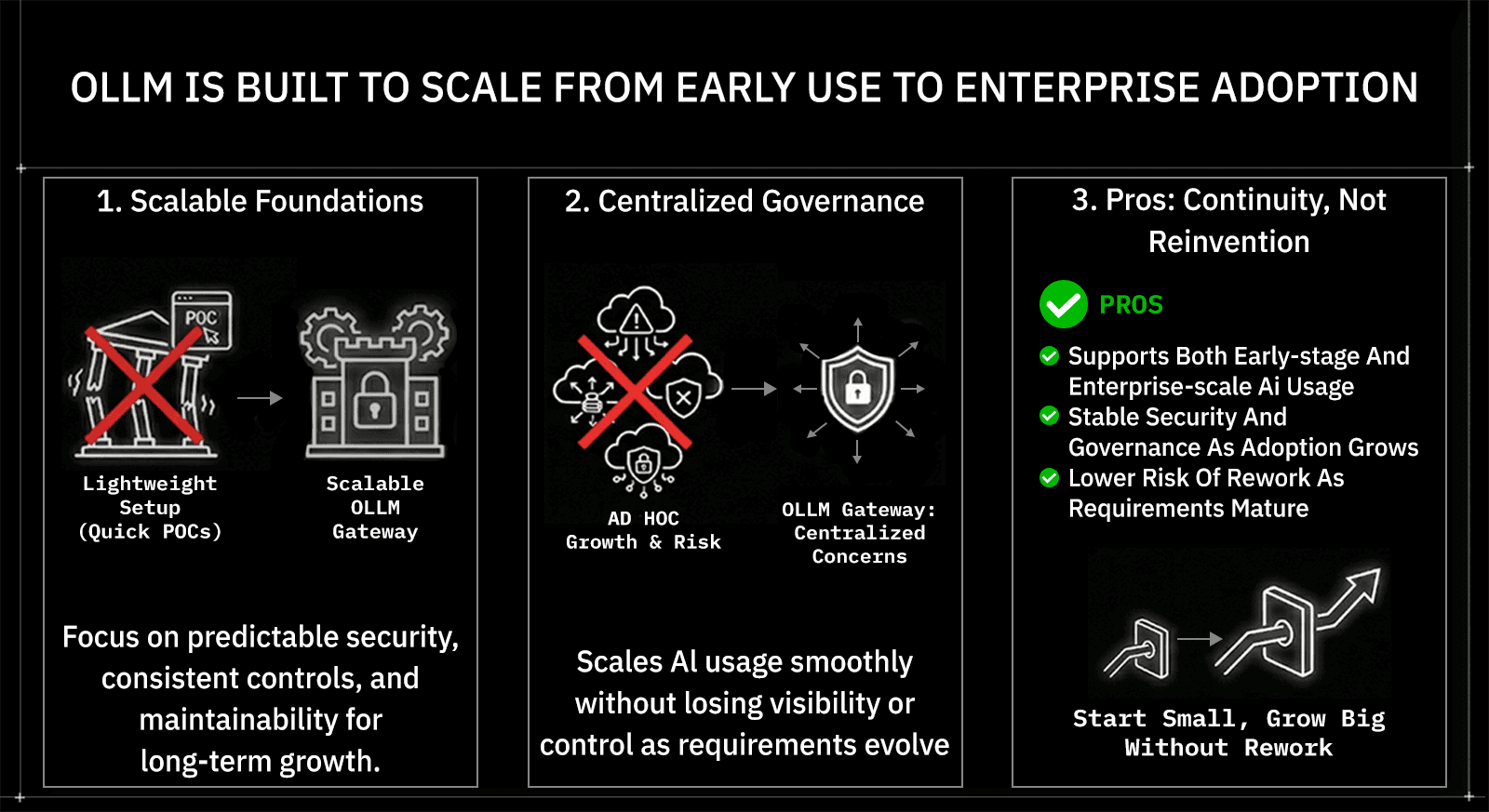

5. OLLM Is Built to Scale From Early Use to Enterprise Adoption

Scalable foundations matter even at the start. OLLM is designed for teams that expect AI usage to grow over time, whether they are beginning with a few use cases or already operating at enterprise scale. Instead of optimizing only for quick proofs of concept, the gateway emphasizes predictable security, consistent controls, and maintainability that continues to hold as usage expands across teams, products, and regions.

Growth introduces governance challenges that lightweight setups struggle to absorb. As more teams adopt AI, differences in security practices, provider choices, and access rules increase operational risk. OLLM centralizes these concerns at the gateway layer, allowing organizations to scale AI usage smoothly without losing visibility or control as requirements evolve.

Scaling is operationally simple. Teams can increase usage by adding more credits as demand grows. When workloads approach sustained rate limits or require guaranteed throughput, organizations can work with OLLM to reserve capacity in advance. This keeps performance predictable without forcing architectural changes or reintegration work.
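Short bursts above a rate limit are usually absorbed on the client with retries, while sustained pressure is what reserved capacity addresses. A minimal Python sketch of exponential backoff with full jitter follows. The `RateLimited` exception is a hypothetical stand-in for however your client surfaces a rate-limit response (commonly HTTP 429), and the attempt count and delays are arbitrary defaults, not OLLM guidance.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical: raised by the caller's client when a request is rate limited."""


def with_backoff(
    call: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on RateLimited, waiting a random time up to base_delay * 2**attempt."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts - 1):
        try:
            return call()
        except RateLimited:
            # Full jitter spreads out retries from many concurrent clients.
            sleep(random.uniform(0, base_delay * 2 ** attempt))
    # Final attempt: a RateLimited raised here propagates to the caller.
    return call()
```

Because `sleep` is injectable, the retry logic can be tested without real delays. If retries become routine rather than occasional, that is the signal to plan reserved capacity instead.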

The benefit is continuity rather than reinvention. Teams can start small, adopt new models, onboard additional users, and mature their AI strategy without replacing or redesigning how data is protected and governed.

Pros

Supports both early-stage and enterprise-scale AI usage

Simple usage-based scaling with optional capacity planning

Stable security and governance as adoption grows

Lower risk of rework as requirements mature

Choosing an AI Gateway Based on Risk, Scale, and Long-Term Readiness

The right AI gateway depends on how much risk an organization is willing to manage over time. Teams experimenting with limited, non-sensitive use cases may accept lighter controls and simpler setups. Enterprises handling proprietary data, regulated information, or customer-facing workflows face a different reality. As AI usage expands, gaps in security, governance, and consistency become harder to justify and more expensive to fix.

OLLM fits organizations planning beyond initial adoption. Its zero-data-retention model, strong encryption, hardware-backed trusted execution environments with cryptographic attestation, provider aggregation, and a compliance-friendly design align with environments where AI is treated as long-term infrastructure. These characteristics matter less for short-lived experiments and more for teams expecting AI to become embedded across products, departments, and regions.

The broader takeaway is about maturity, not hype. In 2026, AI gateways are no longer optional plumbing. They define how safely and sustainably AI systems operate. OLLM sets a clear enterprise standard by prioritizing privacy, security, and scale from the start, making it a strong choice for organizations building AI systems meant to endure, not just launch.

Conclusion: Why OLLM Fits Enterprise AI Strategies in 2026

AI gateways have become a permanent layer in modern AI stacks. As enterprises scale AI across teams and use cases, the need for consistent security, clear governance, and long-term flexibility outweighs short-term convenience. This write-up has shown how gateways solve that problem, and why OLLM stands out through zero data retention, strong encryption, cryptographic TEE attestation proofs, provider aggregation, audit-friendly design, and enterprise-scale readiness.

The next step is to evaluate your own AI risk and growth plans. Review where sensitive data enters your AI workflows, how many providers your teams already rely on, and how difficult audits or internal approvals have become. If AI is moving from isolated use cases into core business processes, the decision to adopt an AI gateway should be made early, not after issues arise.

For teams planning long-term AI adoption, OLLM offers a clear starting point. Exploring its architecture, security guarantees, and integration model can help determine whether your organization is ready to treat AI access as infrastructure, built to scale, govern, and endure.

FAQ

1. How does OLLM handle data privacy and prompt security for enterprise AI workloads?

OLLM enforces zero data retention by design. Prompts and responses are processed ephemerally, encrypted in transit, and never stored or reused. This reduces exposure for sensitive enterprise data and simplifies compliance with data protection and internal governance requirements.

2. Can OLLM be used with multiple LLM providers without changing application code?

Yes. OLLM provides a single API across multiple high-security LLM providers. Teams explicitly select which models to use, while the gateway abstracts provider-specific integrations. This enables easier provider switching and long-term flexibility without application rewrites.

3. What is the difference between an AI gateway and a traditional API gateway?

An AI gateway is built specifically for LLM traffic and governance. While traditional API gateways focus on routing and rate limiting, AI gateways handle prompt security, provider abstraction, encryption of AI requests, and compliance controls tailored to AI workloads and data sensitivity.

4. Do AI gateways support compliance requirements like SOC 2, GDPR, or industry regulations?

AI gateways support compliance by enforcing consistent data handling and security controls. Features such as encrypted request handling, centralized access management, and zero data retention make it easier to demonstrate compliance during audits, though certifications still depend on organizational practices.

5. When should an enterprise adopt an AI gateway instead of direct LLM integrations?

Enterprises should adopt an AI gateway when AI usage involves sensitive data, multiple teams, or long-term scale. Direct integrations may work for early experimentation, but gateways reduce risk, improve governance, and prevent costly re-architecture as AI becomes business-critical.