TL;DR

AI gateways centralize how applications access AI models, replacing fragmented direct integrations with a single control layer for security, routing, and governance.

Direct LLM API usage does not scale safely, especially when multiple teams, models, and sensitive data are involved.

AI gateways enforce consistent security and privacy guarantees, including encryption, access control, auditability, and policy enforcement across all AI traffic.

Enterprise AI gateways reduce vendor lock-in, allowing teams to switch or add models without rewriting application logic.

Platforms like OLLM provide production-ready AI infrastructure that combines zero data retention, hardware-backed execution, and verifiable attestation for privacy-critical workloads.

What Are AI Gateways?

AI gateways are infrastructure layers that manage how applications access and use AI models. Instead of letting every service connect directly to a large language model provider, an AI gateway becomes the single entry point for all AI requests. Applications send prompts to the gateway, which decides where those requests go and how they are handled.

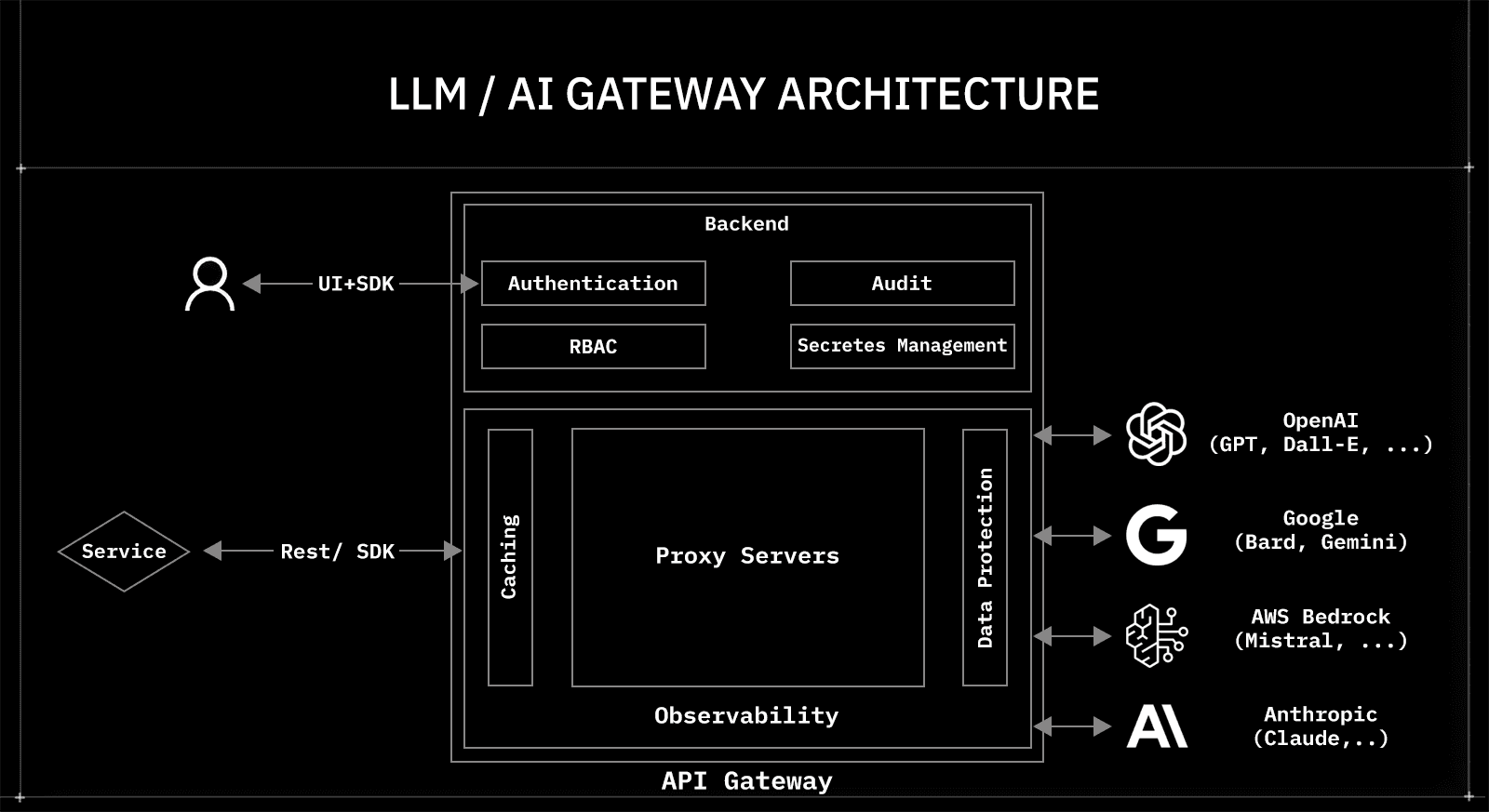

At a basic level, an AI gateway sits between three things:

Applications that need AI capabilities

One or more LLM providers or inference backends

Enterprise policies around security, privacy, and usage

This placement matters. Without a gateway, each application embeds its own logic for authentication, provider selection, logging, and data handling. Over time, this creates inconsistent behavior and hidden risk. With a gateway, those concerns are consolidated into a single shared layer that can be managed and audited centrally.

In practice, AI gateways handle several core functions that direct integrations handle inconsistently, if at all:

Request routing: selecting models based on policy, cost, or performance

Security enforcement: managing credentials, encryption, and access control

Usage visibility: capturing logs and metrics across all AI traffic

Policy consistency: applying the same rules to every team and workload

This is why AI gateways are increasingly treated as part of the core AI stack, not as optional tooling. They turn AI usage from scattered API calls into a governed, production-ready system.

Where AI Gateways Fit in an AI System Architecture

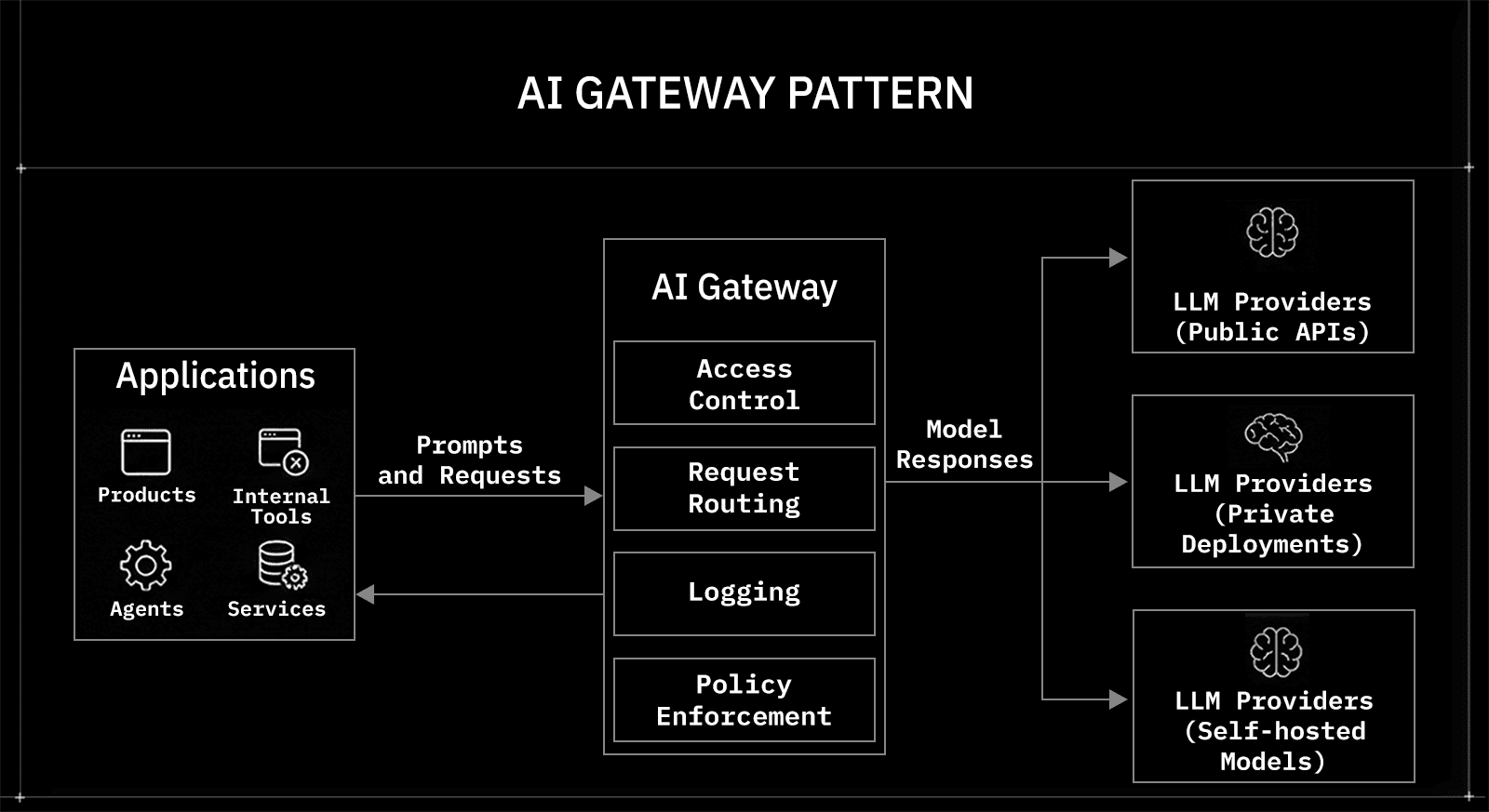

AI gateways sit between applications and AI model providers to control how requests flow. Applications send prompts and receive responses via the gateway rather than calling models directly. This gives teams a single place to manage routing, security, and usage policies.

In a typical setup, the architecture looks like this:

Applications: products, internal tools, agents, and services using AI

AI gateway: access control, request routing, logging, and policy enforcement

LLM providers: public APIs, private deployments, or self-hosted models

This structure separates responsibilities cleanly. Application teams focus on business logic. Model providers focus on inference and performance. The gateway enforces organizational rules across both. Without this layer, each application implements its own authentication, provider selection, and data-handling logic, leading to inconsistent and hard-to-audit code.

By centralizing these concerns, AI gateways turn fragmented integrations into a controlled system that can scale across teams and use cases.

What an AI Gateway Is Responsible For

AI gateways centralize responsibilities that would otherwise be duplicated across applications. When teams integrate models directly, each service ends up making its own decisions about routing, security, logging, and data handling. An AI gateway consolidates those decisions into a single shared layer.

In practice, a production-grade AI gateway is responsible for a small set of critical functions:

Request routing and abstraction: forwarding prompts to the right model based on policy, availability, or performance, without hard-coding providers into applications

Access and credential management: handling API keys, secrets, and authentication so applications never manage provider credentials directly

Security enforcement: applying encryption, isolation, and access controls consistently across all AI traffic

Visibility and auditability: capturing logs, metadata, and usage metrics in one place

These responsibilities are tightly coupled. Routing without security creates risk. Logging without policy enforcement creates noise. The value of an AI gateway lies in owning them all as a single control surface. This is what turns AI usage from scattered integrations into infrastructure that can be trusted at scale.
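To make the idea of a single control surface concrete, here is a minimal sketch of one request passing through all four responsibilities in order. Everything in it is an assumption for illustration: the `Gateway` class, the `fake_backend` helper, the key names, and the provider names are hypothetical and do not describe any particular product's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical backend signature: takes (provider_key, prompt) and returns text.
Backend = Callable[[str, str], str]


@dataclass
class Gateway:
    app_keys: Dict[str, str]        # gateway-issued app key -> team name
    routes: Dict[str, str]          # logical model alias -> provider name
    backends: Dict[str, Backend]    # provider name -> callable backend
    provider_keys: Dict[str, str]   # provider name -> secret held only by the gateway
    audit_log: List[dict] = field(default_factory=list)

    def handle(self, app_key: str, model_alias: str, prompt: str) -> str:
        # 1. Access control: only known application keys may call the gateway.
        team = self.app_keys.get(app_key)
        if team is None:
            raise PermissionError("unknown application key")

        # 2. Routing: the application names an alias, the gateway picks the provider.
        provider = self.routes[model_alias]

        # 3. Credential management: the provider secret never leaves the gateway.
        response = self.backends[provider](self.provider_keys[provider], prompt)

        # 4. Visibility: record metadata about the call in one place.
        self.audit_log.append(
            {"team": team, "alias": model_alias, "provider": provider,
             "prompt_chars": len(prompt)}
        )
        return response


def fake_backend(name: str) -> Backend:
    # Stand-in for a real provider client; it only echoes what it received.
    def call(provider_key: str, prompt: str) -> str:
        assert provider_key  # a real backend would authenticate with this key
        return f"[{name}] {prompt}"
    return call


gateway = Gateway(
    app_keys={"app-key-support": "support"},
    routes={"chat-default": "provider_a"},
    backends={"provider_a": fake_backend("provider_a"),
              "provider_b": fake_backend("provider_b")},
    provider_keys={"provider_a": "secret-a", "provider_b": "secret-b"},
)

reply = gateway.handle("app-key-support", "chat-default", "Summarize this ticket")
```

In this sketch the application only ever holds its own gateway key and a model alias. Pointing `chat-default` at `provider_b` is a one-line change to `routes`, and the access check and audit entry apply to the new provider without any application change.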

Why Security and Privacy Push Teams Toward AI Gateways

Security breaks first when AI usage grows without a gateway. Direct integrations send prompts and data straight from applications to external models, often with limited visibility into how that data is handled, stored, or logged. As more teams ship AI features, this creates blind spots that security and compliance teams cannot easily close.

AI gateways address this by enforcing security and privacy at a single choke point. Instead of trusting every application to “do the right thing,” the gateway applies the same guarantees to all traffic. Encryption can be enforced consistently. Access controls become uniform. Sensitive data handling stops depending on individual developer decisions.

This matters most in environments handling proprietary or regulated data. Logs, prompts, and responses need clear boundaries. Retention policies need to be provable. Audit trails need to be built in by design, not as an afterthought. AI gateways make these requirements enforceable at the infrastructure level, which is why security and privacy concerns are now the strongest drivers of adoption.
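One way to picture a clear boundary between audit trails and content is an audit entry that records who called which model and when, but never the prompt or response text. The sketch below assumes that design; the `audit_record` function and its field names are hypothetical, and a real gateway may draw the boundary differently.

```python
import json
import time
import uuid


def audit_record(team: str, model: str, prompt: str, response: str) -> dict:
    """Build an audit entry that describes a call without storing its content."""
    return {
        "request_id": str(uuid.uuid4()),   # lets other systems reference this call
        "timestamp": time.time(),
        "team": team,
        "model": model,
        # Only sizes are kept; the prompt and response text are never copied in.
        "prompt_chars": len(prompt),
        "response_chars": len(response),
    }


prompt = "Customer record: account locked after password reset"
response = "Suggested reply: verify identity, then unlock the account"

record = audit_record("support", "chat-default", prompt, response)
serialized = json.dumps(record)
```

Because the record is built in one function at the gateway, what is and is not retained can be reviewed in a single place rather than in every application's logging code.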

When an AI Gateway Becomes a Hard Requirement

AI gateways stop being optional once AI moves from isolated features to shared infrastructure. Early experiments can tolerate direct model calls. Production systems cannot. The shift usually happens quietly, then all at once.

Common signals show up first in operations and security:

Multiple models in use: different teams rely on different providers for cost, latency, or capability

Sensitive data in prompts: internal documents, customer records, or proprietary code flow through models

AI shared across teams: support, analytics, engineering, and ops reuse the same AI capabilities

Compliance pressure: audits, vendor reviews, or regulatory requirements enter the picture

At this stage, direct integrations create friction. Every new model requires duplicate reviews. Every security update requires coordinated changes across services. Visibility remains partial.

An AI gateway resolves this by creating a single enforcement point. Policies apply everywhere by default. Providers can change without rewriting applications. As AI becomes embedded across the organization, the gateway becomes the layer that keeps the system manageable rather than brittle.

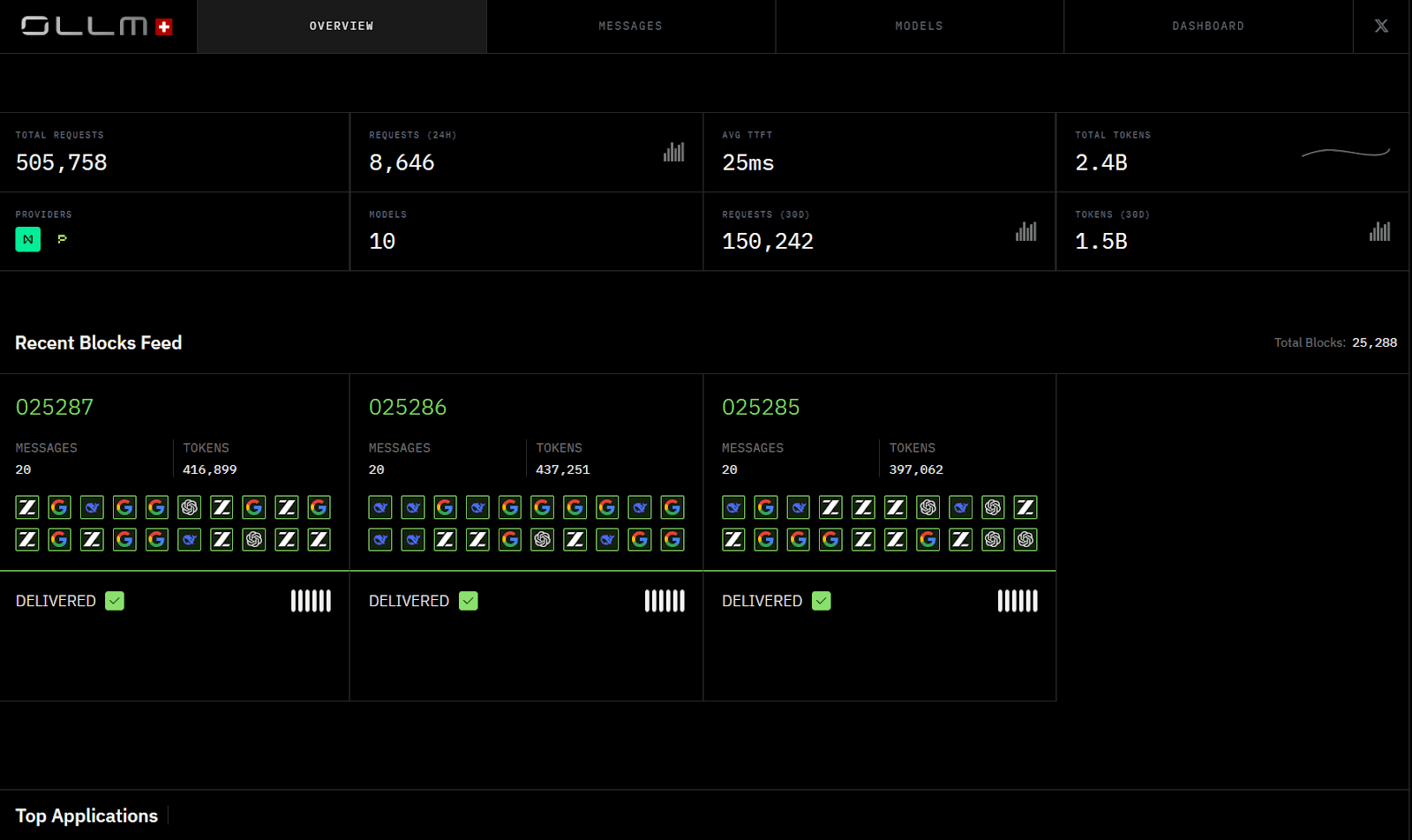

OLLM: An Enterprise AI Gateway Built for Verifiable Privacy

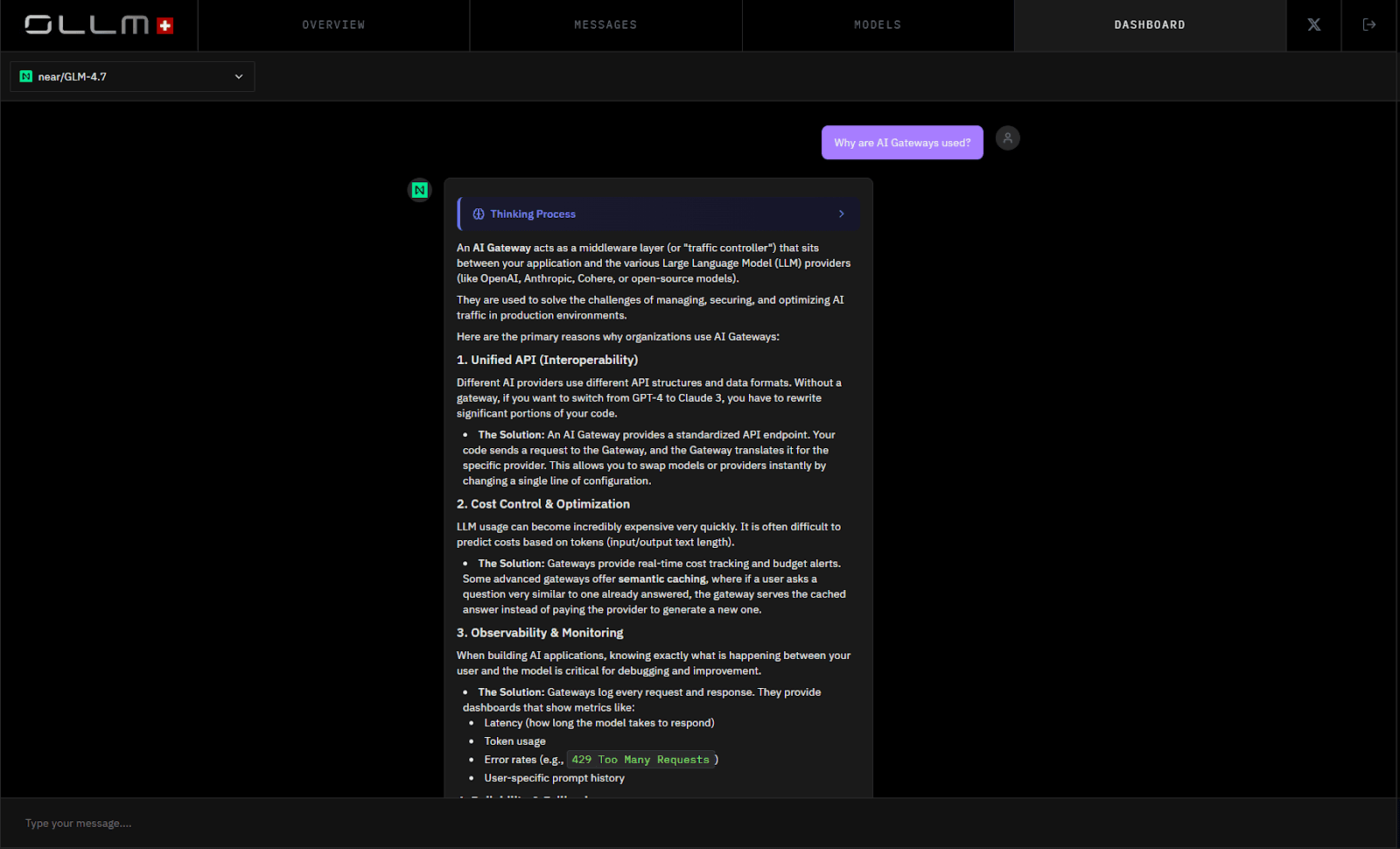

OLLM is an enterprise-focused AI gateway designed to route AI traffic through high-security LLM providers with strict zero-retention guarantees. Instead of exposing applications directly to external models, OLLM acts as a secure routing layer that enforces encryption, isolation, and governance by default.

At its core, OLLM aggregates dozens of AI models behind a single, OpenAI-compatible API. Applications integrate once and route all AI requests through OLLM. The gateway handles provider access, request forwarding, and security controls without requiring teams to embed provider credentials or model-specific logic into their applications. This keeps AI architecture stable as usage scales across teams.

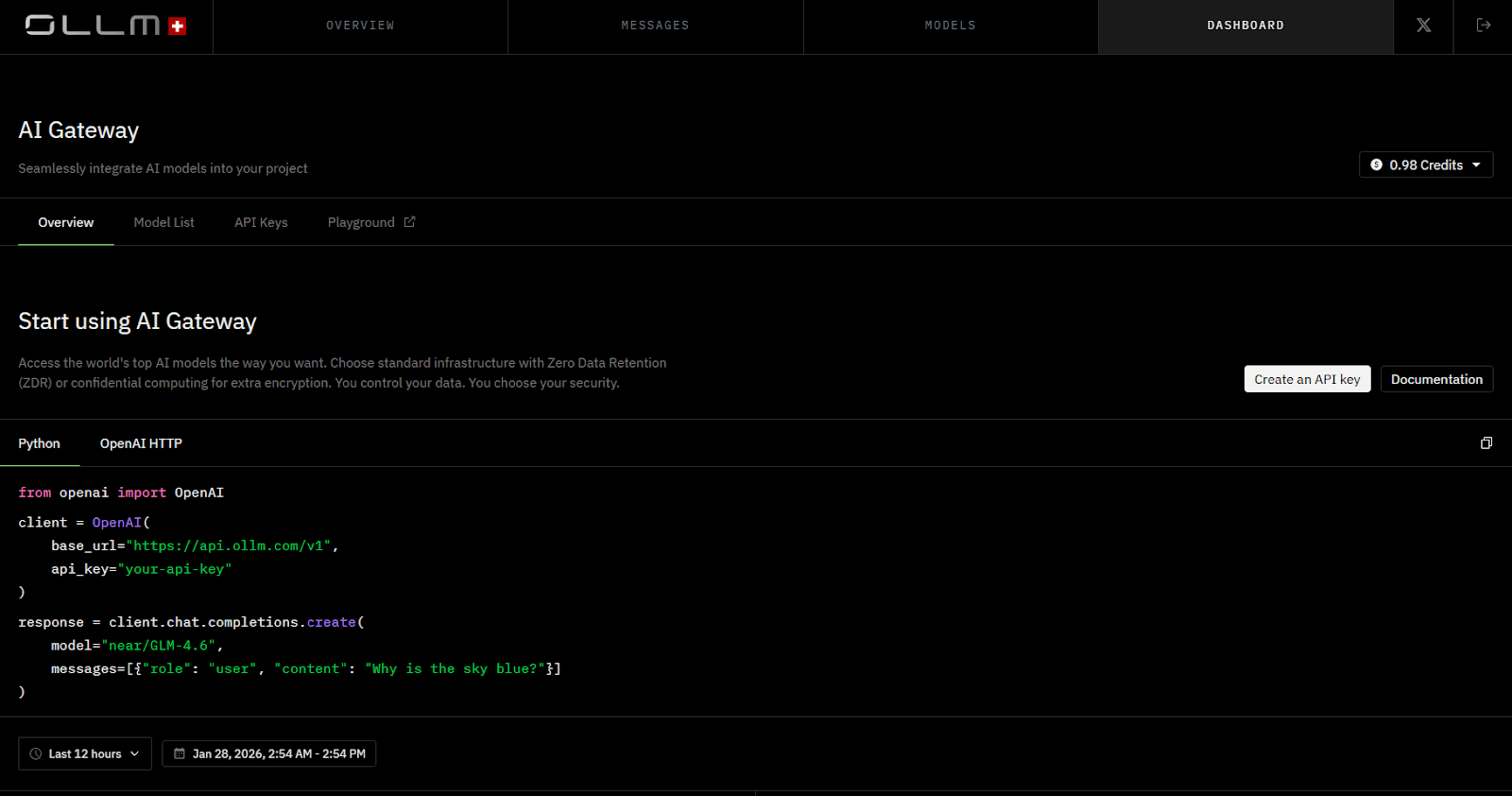

Minimal Integration: OpenAI-Compatible by Design

OLLM is designed to be drop-in compatible with existing OpenAI-based applications. In most cases, adopting OLLM does not require rewriting prompts, changing SDKs, or modifying application logic. Teams simply update the API endpoint and replace their existing API key with an OLLM-issued key.

Because OLLM follows the OpenAI API format, existing tools, frameworks, and SDKs continue to work as-is. This lets teams introduce enterprise-grade security, zero data retention, and verifiable execution without disrupting developer workflows.

In practical terms, onboarding looks like this:

Change the API base URL to point to OLLM

Replace the API key with an OLLM-managed key

Keep the same request format, models, and client libraries

This approach lets teams adopt an AI gateway incrementally. Applications keep working. Security and governance improve immediately. In most cases, no refactoring is required.
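The onboarding steps above can be sketched as a request builder in which only the base URL and key are gateway-specific. The URL, key, and model name below are placeholders, not real OLLM values, and the request is built but deliberately not sent. The path and body follow the OpenAI chat completions format.

```python
import json
import urllib.request

# Both values are placeholders: use the base URL and key issued for your account.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"   # hypothetical endpoint
GATEWAY_API_KEY = "YOUR_GATEWAY_KEY"                   # hypothetical key


def build_chat_request(base_url: str, api_key: str, model: str,
                       prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(GATEWAY_BASE_URL, GATEWAY_API_KEY, "example-model", "Hello")
# Sending is left out on purpose; urllib.request.urlopen(request) would perform the call.
```

Teams using the official OpenAI Python SDK would typically make the same change by passing `base_url` and `api_key` to the client constructor, leaving the rest of their calls untouched.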

OLLM’s security model is built around verifiable execution and data handling:

Zero data retention, ensuring prompts and responses are not stored

Trusted Execution Environments (TEEs) for all model inference

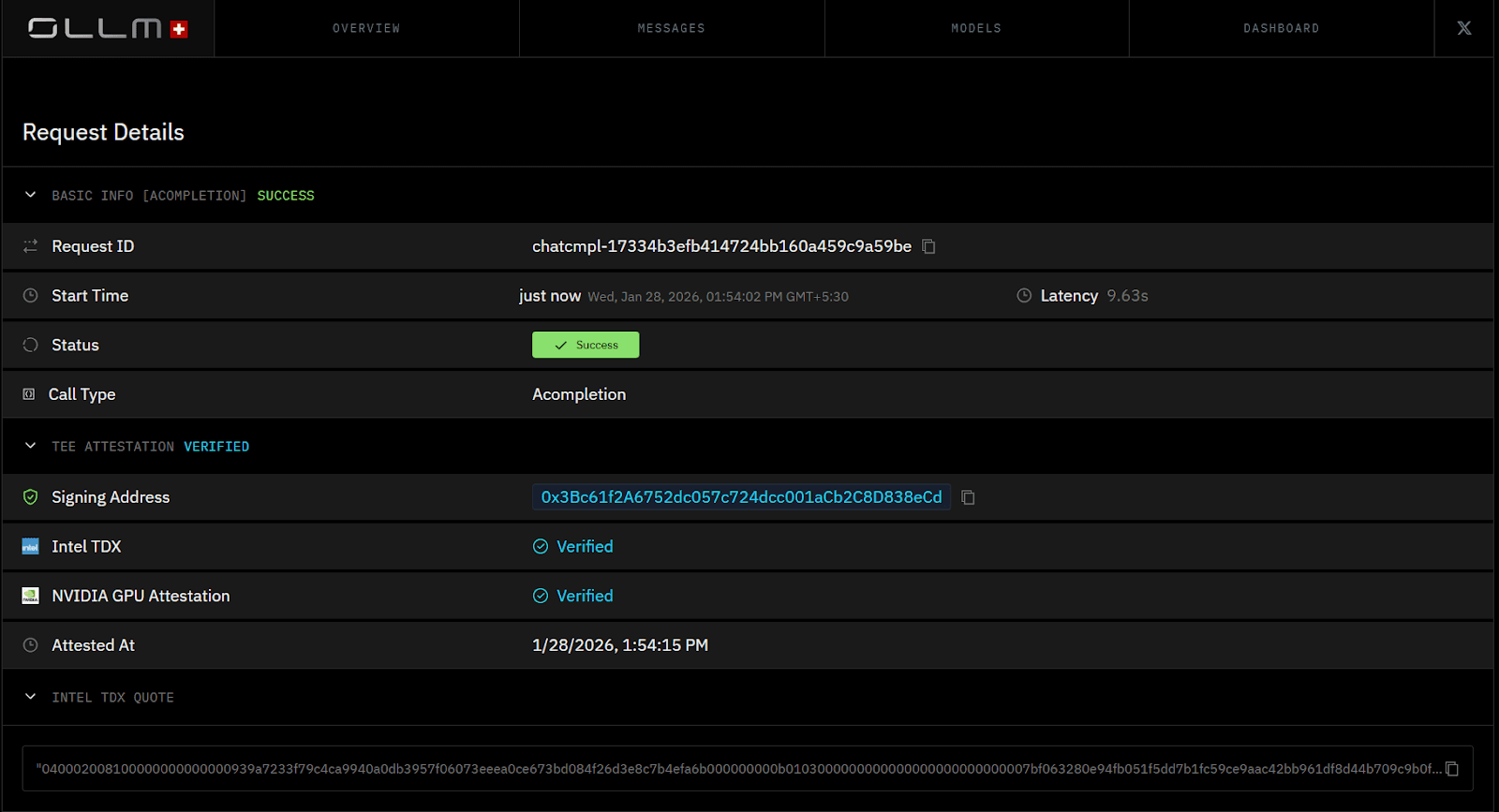

Cryptographic attestation using Intel TDX and NVIDIA GPU attestation, which customers can independently verify

Strong isolation guarantees, preventing data leakage across providers and workloads

Strengths

Designed for production and security-sensitive environments

Verifiable privacy through hardware-backed execution

Access to many models through a single, stable API

This design positions OLLM as infrastructure rather than tooling. Privacy, security, and verification are treated as first-class requirements, enforced at the execution layer rather than left to policy or trust alone.

How AI Gateways Reduce Vendor Lock-In While Increasing Control

AI gateways decouple applications from specific AI providers. Without a gateway, model choices are baked directly into application code. Switching providers means refactoring logic, updating credentials, and revalidating security assumptions across services. That friction quietly locks teams into early decisions.

A gateway removes this coupling by acting as an abstraction layer. Applications send requests to a stable interface. The gateway decides which model handles the request. Providers can change underneath without forcing changes upstream. This keeps architecture flexible as models, pricing, and capabilities evolve.

This control shows up in a few practical ways:

Provider portability: move between models based on cost, latency, or performance

Policy-driven routing: enforce rules without touching application code

Negotiation leverage: reduce dependency on any single vendor

Future-proof architecture: adapt as the AI ecosystem changes

By separating application logic from provider decisions, AI gateways let teams adopt new models quickly while keeping operational control firmly centralized.
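As a rough illustration of policy-driven routing, the sketch below picks the cheapest provider that meets a latency bound and an approval requirement. The `Provider` and `Policy` types, the provider names, and all cost and latency figures are invented for the example and do not reflect real pricing or any specific gateway's routing logic.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Provider:
    name: str
    cost_per_1k_tokens: float   # illustrative numbers, not real pricing
    p95_latency_ms: int
    approved: bool              # e.g. passed the organization's security review


@dataclass(frozen=True)
class Policy:
    max_latency_ms: int
    require_approved: bool = True


def choose_provider(providers: List[Provider], policy: Policy) -> Optional[Provider]:
    """Return the cheapest provider that satisfies the policy, or None."""
    eligible = [
        p for p in providers
        if p.p95_latency_ms <= policy.max_latency_ms
        and (p.approved or not policy.require_approved)
    ]
    return min(eligible, key=lambda p: p.cost_per_1k_tokens, default=None)


providers = [
    Provider("provider_a", cost_per_1k_tokens=0.50, p95_latency_ms=900, approved=True),
    Provider("provider_b", cost_per_1k_tokens=0.20, p95_latency_ms=1500, approved=True),
    Provider("provider_c", cost_per_1k_tokens=0.10, p95_latency_ms=700, approved=False),
]

# Same provider list, different policies, different routing decisions.
interactive = choose_provider(providers, Policy(max_latency_ms=1000))
batch = choose_provider(providers, Policy(max_latency_ms=5000))
```

Here the interactive policy selects `provider_a` and the batch policy selects `provider_b`, while the unapproved `provider_c` is never chosen despite being cheapest. Adding or removing a provider changes only the list the gateway holds, not the applications that call it.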

Why AI Gateways Are Becoming Core Infrastructure

AI gateways are no longer an optimization layer; they are becoming part of the base infrastructure for AI systems. As AI usage expands across teams and workflows, organizations need consistent controls that do not depend on individual applications or developers making the right choices.

The pattern mirrors earlier infrastructure shifts. APIs needed gateways once traffic, security, and governance became shared concerns. AI is following the same path. Models change quickly. Providers evolve. Regulatory expectations tighten. What stays constant is the need for a stable control layer that enforces how AI is accessed and used.

In practice, this means:

Security and privacy are enforced once, not reimplemented everywhere

Providers can be swapped or added without architectural churn

Auditability and compliance are built into the system by default

As AI becomes embedded in core products and operations, gateways move from “nice to have” to foundational. They turn AI from a collection of external dependencies into a system that can be governed, trusted, and scaled over time.

Conclusion: AI Gateways Turn AI Usage Into Trustworthy Infrastructure

AI gateways exist to solve the structural problems that appear when AI moves into production. They centralize access, enforce security and privacy, and give organizations control over how models are used across teams and systems. Instead of fragmented integrations and implicit trust, gateways introduce a clear control layer that makes AI auditable, governable, and scalable. As AI becomes embedded in core products and operations, this layer stops being optional and starts defining whether AI systems can be trusted long term.

Teams building with sensitive data or operating at scale should evaluate AI gateways early. If privacy, verification, and provider flexibility matter, platforms like OLLM are designed specifically for that reality. With zero data retention, hardware-backed execution, and verifiable attestation, OLLM enables AI adoption without compromising control. Exploring an AI gateway is no longer about optimization; it is about setting the right foundation before direct integrations become too entangled to unwind.

FAQ

1. What is the difference between an AI gateway and direct LLM API integration?

An AI gateway adds a control layer between applications and LLM providers. While direct LLM API integration connects services straight to a model, an AI gateway centralizes security, access control, logging, and governance. This makes AI usage auditable, provider-agnostic, and easier to manage at scale.

2. Do AI gateways slow down LLM inference or increase latency?

Modern AI gateways are designed to minimize overhead. A gateway typically adds one extra network hop plus its policy checks, which in most cases is small compared to model inference time. The trade-off favors consistency, security, and visibility, especially for production workloads that use multiple AI models.

3. How does OLLM handle data privacy and zero data retention?

OLLM enforces zero data retention by design. Prompts and responses are not stored, logged, or reused. All model execution occurs within Trusted Execution Environments (TEEs), with cryptographic attestation via Intel TDX and NVIDIA GPU attestation that customers can independently verify.

4. Can OLLM be used with multiple AI models through a single API?

Yes. OLLM aggregates dozens of AI models behind a single OpenAI-compatible API. Applications integrate once and gain access to multiple providers without embedding model-specific logic or credentials. This simplifies AI architecture and reduces long-term vendor lock-in.

5. When should an enterprise adopt an AI gateway?

An AI gateway becomes necessary once AI is used across teams, involves sensitive data, or relies on multiple model providers. Organizations facing security reviews, compliance requirements, or scaling AI workloads typically adopt an AI gateway to enforce consistent policies and maintain architectural control.