
TL;DR

Secure enclaves protect AI data while it is being processed. They use CPU-level isolation to keep prompts, context, and intermediate inference data inaccessible to operating systems, cloud infrastructure, and administrators, closing the long-standing gap around data-in-use security.

AI inference creates security risks that encryption alone cannot solve. Large language models require plaintext data in memory, which exposes sensitive enterprise information to memory inspection, logging, and privileged access in shared or managed environments.

Zero-knowledge AI becomes possible with hardware-backed isolation. Secure enclaves ensure that sensitive data is decrypted and processed only inside protected execution boundaries, with remote attestation enabling enterprises to verify that trusted code is running securely.

Software-only AI security breaks down at enterprise scale. Without hardware isolation, enterprises cannot reliably prevent runtime exposure, insider access, or audit gaps when deploying AI systems in production.

Ollm applies secure enclaves as the foundation of enterprise AI execution. By combining hardware-backed isolation, end-to-end encryption, and a single API for hundreds of LLMs, Ollm enables zero-knowledge AI inference without expanding trust boundaries as AI usage scales.

What are secure enclaves?

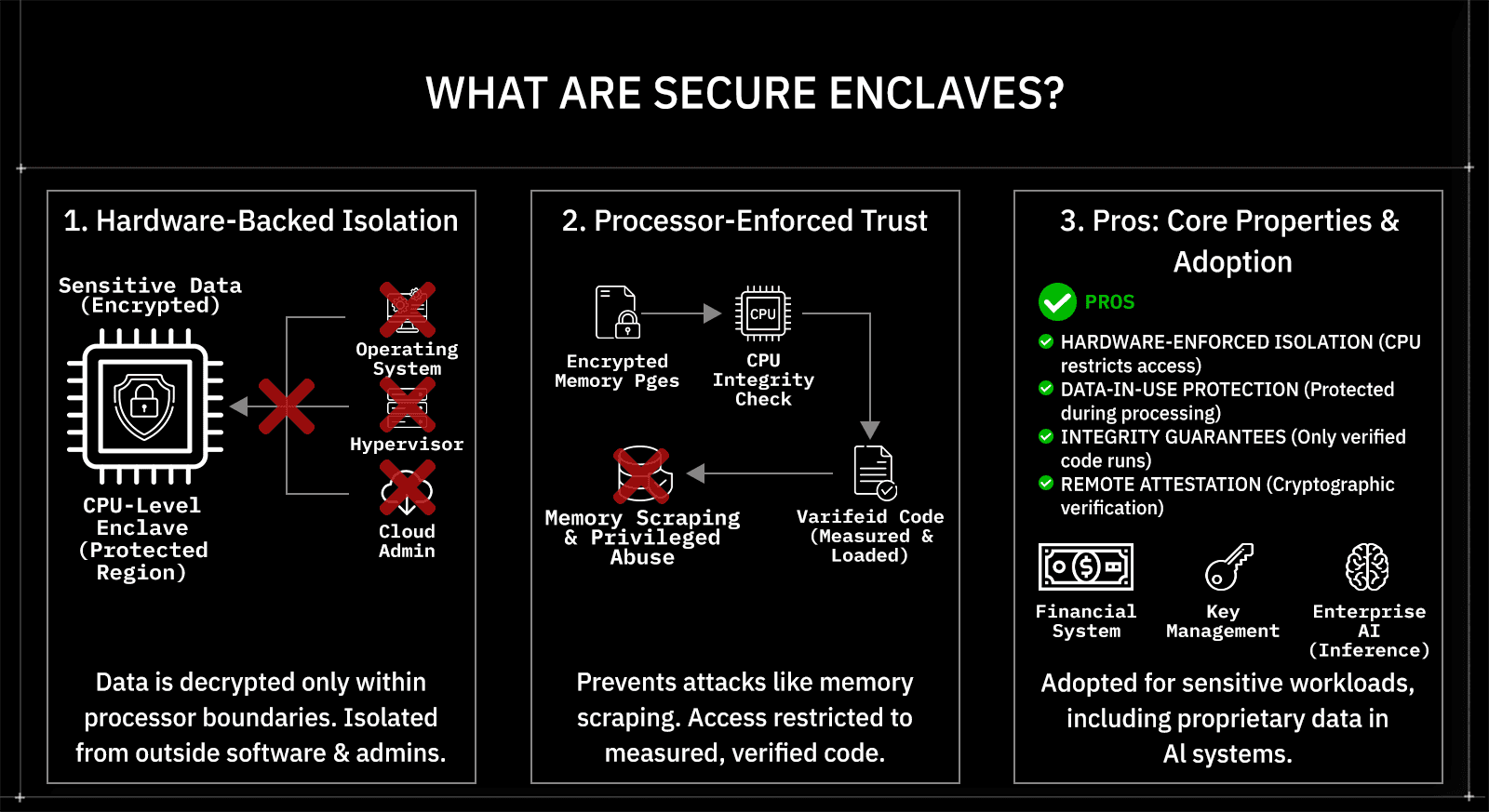

Secure enclaves are hardware-backed execution environments that keep code and data isolated while computation is actively happening. They are implemented at the CPU level and create a protected region of memory in which sensitive data is decrypted only within the processor's boundaries. Software outside the enclave, including the operating system, hypervisor, and firmware, cannot read the data inside it or modify it undetected, and neither can the cloud administrators who operate that software. This protects data in use, not just data stored on disk or moving across the network.

Technically, secure enclaves change how trust is enforced during execution. Instead of relying on software permissions or infrastructure controls, enclaves rely on processor-enforced isolation. Memory pages belonging to the enclave are encrypted and integrity-checked by the CPU, and access is restricted to code that has been explicitly loaded and measured into the enclave. This design prevents common attack vectors such as memory scraping, privileged access abuse, and runtime inspection, even in multi-tenant cloud environments.

Several core properties define how secure enclaves work in practice:

Hardware-enforced isolation: The CPU ensures that only enclave code can access enclave memory.

Data-in-use protection: Sensitive data remains protected while being processed, not only when stored or transmitted.

Integrity guarantees: Only verified code is allowed to run inside the enclave.

Remote attestation: External systems can cryptographically verify that trusted code is running inside a genuine enclave.
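The integrity property above rests on "measuring" code before it runs. A minimal sketch of that idea follows, assuming a plain SHA-256 digest as a stand-in for the measurement; real TEEs compute measurements in hardware-defined ways, and the names `measure`, `is_trusted`, and the sample code strings are hypothetical.

```python
import hashlib
import hmac


def measure(code: bytes) -> str:
    """Return a SHA-256 digest standing in for an enclave measurement."""
    return hashlib.sha256(code).hexdigest()


def is_trusted(code: bytes, expected_measurement: str) -> bool:
    """Accept code only if its measurement matches the pinned value."""
    return hmac.compare_digest(measure(code), expected_measurement)


# Hypothetical enclave code: the build that was reviewed, and a modified copy.
approved_code = b"def infer(prompt): return model(prompt)"
tampered_code = approved_code + b"\nleak(prompt)"

# The relying party pins the measurement of the build it reviewed.
expected = measure(approved_code)

print(is_trusted(approved_code, expected))
print(is_trusted(tampered_code, expected))
```

Any change to the loaded code changes the digest, so a verifier that pins the expected value rejects a modified build without needing to inspect the code itself.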

Enterprises adopt secure enclaves when workloads must process sensitive data without expanding trust boundaries. Financial systems, cryptographic key management, and confidential computing workloads already rely on enclaves for this reason. As AI systems increasingly handle proprietary data, regulated information, and internal context during inference, the same hardware-backed isolation model becomes directly applicable to enterprise AI security.

Why AI workloads expose new security gaps

AI workloads introduce security risks that traditional enterprise controls were not designed to handle. Large language models process prompts, context, and intermediate results in plaintext during inference. This data often includes proprietary source code, internal documents, customer information, or regulated data. While encryption protects data at rest and in transit, it provides no protection once the model begins computation and the data is loaded into memory.

Inference expands the attack surface beyond what most security models assume. Prompts and responses may pass through shared infrastructure, third-party runtimes, or managed services before a result is returned. Memory, logs, debugging tools, and privileged system access are potential points of exposure. Even well-secured environments struggle to provide strong guarantees once data leaves the application boundary and enters model execution.

Several characteristics of modern AI systems make these gaps more pronounced:

Plaintext processing: Models require readable data to generate outputs.

Shared execution environments: Many LLM services run on multi-tenant infrastructure.

Extended data lifetimes: Prompts and context may persist in memory longer than expected.

Operational visibility: Logging and monitoring tools can unintentionally capture sensitive data.

As AI moves from experimentation to production, these gaps become unacceptable. Enterprises deploying internal copilots, customer-facing AI, or decision-support systems need stronger assurances that sensitive data is not exposed during inference. This shift is driving demand for security mechanisms that protect data while it is actively being processed, rather than relying solely on perimeter or transport-layer controls.

How secure enclaves enable zero-knowledge AI

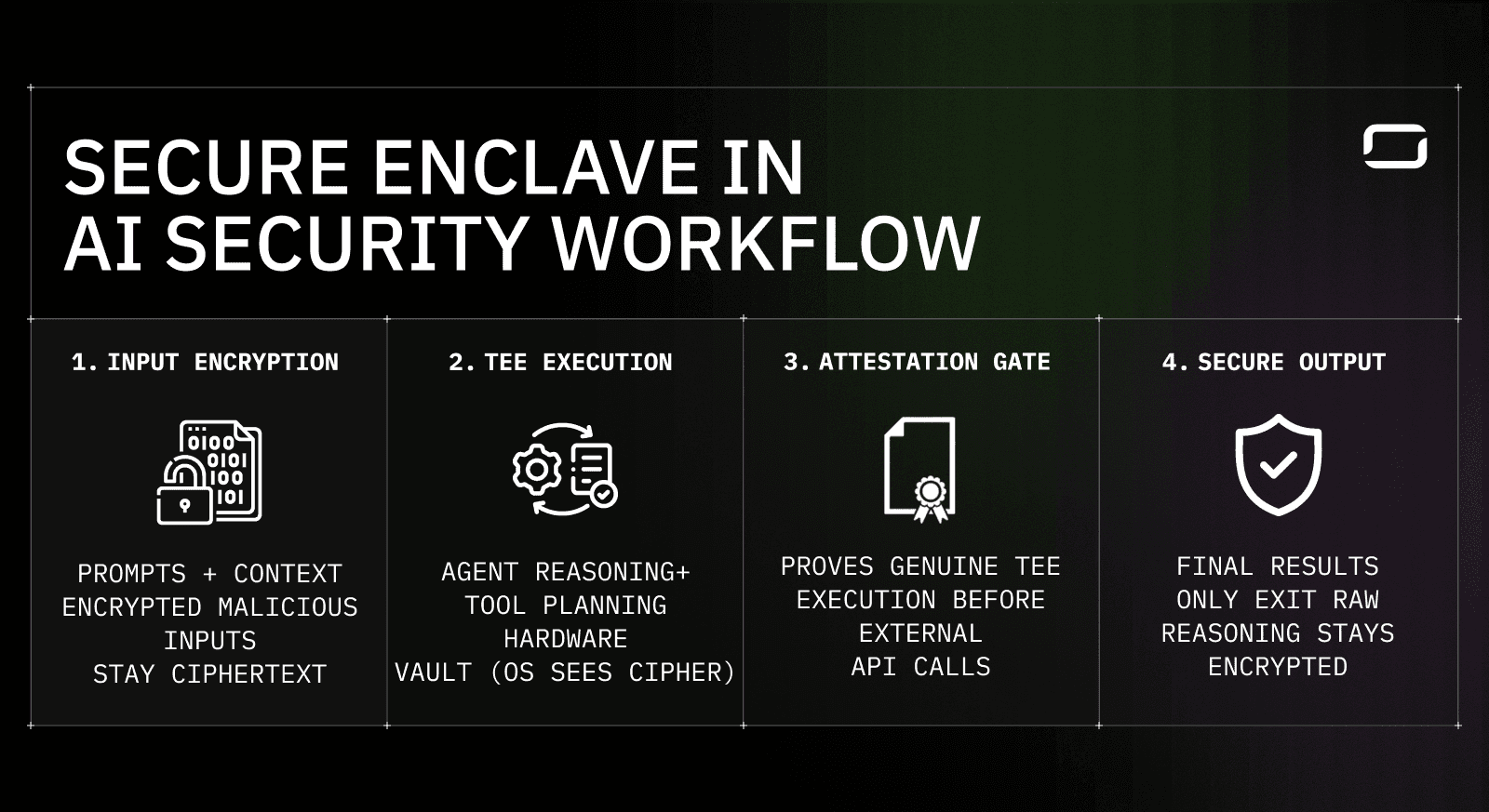

Secure enclave–based AI inference flow showing encrypted inputs, TEE execution, cryptographic attestation, and controlled output.

Zero-knowledge AI is enforced by controlling how data moves through the inference lifecycle. Secure enclaves ensure that sensitive data remains protected before execution, during inference, and after results are produced. The four stages below map directly to the workflow shown in the diagram.

1. Input encryption keeps prompts and context protected

Prompts, context, and sensitive inputs enter the system in encrypted form.

Until execution begins inside a trusted execution environment (TEE), this data remains ciphertext.

The operating system, host infrastructure, and surrounding services never see plaintext inputs.

What this prevents: early inspection, logging leakage, and pre-execution exposure.

2. TEE execution isolates reasoning and intermediate state

Decryption happens only inside the secure enclave.

Model reasoning, intermediate states, and tool-planning logic execute entirely within hardware-isolated memory.

The CPU enforces isolation, preventing OS- or hypervisor-level access to enclave memory.

What this prevents: memory scraping, privileged access abuse, and runtime inspection.

3. Cryptographic attestation verifies trusted execution

Before external API calls or downstream interactions occur, the enclave produces TEE attestation proofs.

These proofs confirm that approved code is running inside a genuine hardware-backed enclave.

External systems can verify execution integrity before allowing data exchange.

Why this matters: zero-knowledge guarantees become verifiable, not trust-based.
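The attestation check in this stage can be sketched as a simulation. This is not a real TEE API: an HMAC with a shared key stands in for the hardware-rooted signature (real attestation uses asymmetric signatures chained to the chip vendor, so the verifier never holds the signing key), and `enclave_quote`, `verify_quote`, and `HARDWARE_KEY` are hypothetical names.

```python
import hashlib
import hmac
import json
import secrets

# Assumption: stand-in for a vendor-rooted attestation key held by the hardware.
HARDWARE_KEY = secrets.token_bytes(32)

APPROVED_CODE = b"inference-runtime-v1"  # hypothetical enclave build
APPROVED_MEASUREMENTS = {hashlib.sha256(APPROVED_CODE).hexdigest()}


def enclave_quote(code: bytes, nonce: bytes) -> dict:
    """Produce a signed report binding the code measurement to the verifier's nonce."""
    claims = {"measurement": hashlib.sha256(code).hexdigest(), "nonce": nonce.hex()}
    body = json.dumps(claims, sort_keys=True).encode()
    signature = hmac.new(HARDWARE_KEY, body, hashlib.sha256).hexdigest()
    return {"body": body, "signature": signature}


def verify_quote(quote: dict, nonce: bytes, approved: set) -> bool:
    """Check the signature, the freshness of the nonce, and the measurement."""
    expected = hmac.new(HARDWARE_KEY, quote["body"], hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, quote["signature"]):
        return False
    claims = json.loads(quote["body"])
    return claims["nonce"] == nonce.hex() and claims["measurement"] in approved


# The verifier issues a fresh nonce, then releases data only if the quote checks out.
nonce = secrets.token_bytes(16)
quote = enclave_quote(APPROVED_CODE, nonce)
release_data = verify_quote(quote, nonce, APPROVED_MEASUREMENTS)
```

The nonce ties the report to this particular exchange, so a quote recorded from an earlier session cannot be replayed to unlock new data.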

4. Secure output releases only final results

After inference completes, only the final response exits the enclave.

Intermediate reasoning, internal state, and execution traces stay inside encrypted enclave memory.

No sensitive data is exposed through logs, telemetry, or debugging tools.

What this prevents: post-execution leakage and retrospective data access.

Together, these stages ensure that sensitive AI data never leaves hardware-enforced trust boundaries. Secure enclaves make zero-knowledge AI practical by protecting data throughout the entire execution lifecycle, giving enterprises verifiable guarantees during inference without relying on trust in the underlying infrastructure.
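The data flow across these stages can be sketched as a toy simulation, leaving out the attestation step. Several things here are assumptions: the cipher is a teaching stand-in built from `hashlib` so the example needs only the standard library (a real system would use a vetted AEAD such as AES-GCM), the session key is assumed to have been agreed with the enclave beforehand, and `ToyEnclave`, `untrusted_host`, and the sample prompt are hypothetical.

```python
import hashlib
import hmac
import secrets


def _subkey(key: bytes, label: bytes) -> bytes:
    """Derive separate encryption and MAC keys from one session key."""
    return hashlib.sha256(label + key).digest()


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy hash-based keystream. Illustration only, not for production use."""
    out, counter = b"", 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]


def seal(key: bytes, plaintext: bytes) -> bytes:
    """Encrypt, then append an integrity tag over nonce and ciphertext."""
    nonce = secrets.token_bytes(16)
    stream = _keystream(_subkey(key, b"enc"), nonce, len(plaintext))
    ciphertext = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(_subkey(key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def unseal(key: bytes, blob: bytes) -> bytes:
    """Verify the tag, then decrypt."""
    nonce, ciphertext, tag = blob[:16], blob[16:-32], blob[-32:]
    expected = hmac.new(_subkey(key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("ciphertext failed integrity check")
    stream = _keystream(_subkey(key, b"enc"), nonce, len(ciphertext))
    return bytes(a ^ b for a, b in zip(ciphertext, stream))


class ToyEnclave:
    """Stands in for the TEE: the only place plaintext ever exists."""

    def __init__(self, session_key: bytes):
        self._key = session_key

    def infer(self, sealed_prompt: bytes) -> bytes:
        prompt = unseal(self._key, sealed_prompt).decode()  # stage 2: decrypt inside
        words = prompt.split()                              # intermediate state stays local
        answer = f"{len(words)} words received"             # placeholder for model output
        return seal(self._key, answer.encode())             # stage 4: only the result leaves


def untrusted_host(enclave: ToyEnclave, sealed_prompt: bytes, host_log: list) -> bytes:
    """Routing layer: it can log everything it sees, which is only ciphertext."""
    host_log.append(sealed_prompt)
    sealed_answer = enclave.infer(sealed_prompt)
    host_log.append(sealed_answer)
    return sealed_answer


session_key = secrets.token_bytes(32)
prompt = "Q3 revenue forecast for ACME"
host_log = []

sealed = seal(session_key, prompt.encode())  # stage 1: encrypt before sending
sealed_reply = untrusted_host(ToyEnclave(session_key), sealed, host_log)
reply = unseal(session_key, sealed_reply).decode()
```

In this sketch the host handles both the request and the response, yet everything it can record is ciphertext. In a real deployment the Python class boundary is replaced by processor-enforced memory isolation.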

Why enterprise AI security breaks without hardware isolation

Enterprise AI security fails when runtime isolation is treated as a software problem. Most AI security controls stop protecting data once encryption ends and inference begins. At that point, prompts, context, and intermediate results exist in plaintext in memory. Software-based permissions, access controls, and monitoring tools cannot reliably prevent privileged access or runtime inspection in shared or managed environments.

Runtime exposure introduces risks that are difficult to mitigate with policy alone. Operating systems, hypervisors, debugging tools, and logging pipelines all have legitimate access paths that can be abused or misconfigured. Insider threats, misaligned privileges, and accidental data capture become realistic failure modes. Even well-governed environments struggle to prove that sensitive data was never accessible during execution.

Common failure points in software-only AI security include:

Memory inspection: Privileged processes can read application memory.

Operational tooling: Logs, traces, and crash dumps may capture sensitive data.

Infrastructure trust: Cloud administrators and service operators remain implicitly trusted parties.

Audit gaps: Teams cannot prove that data stayed isolated during inference.
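The operational tooling failure is easy to reproduce. The sketch below assumes a hypothetical `handle_request` function and logger name and uses an invented sample prompt; it shows a routine debug statement copying the prompt into a log stream that sits outside any protected boundary.

```python
import io
import logging

# Capture log output in memory so the leak can be inspected.
log_stream = io.StringIO()
logger = logging.getLogger("inference_service")  # hypothetical service logger
logger.setLevel(logging.DEBUG)
logger.addHandler(logging.StreamHandler(log_stream))
logger.propagate = False


def handle_request(prompt: str) -> str:
    """Hypothetical request handler with an ordinary debug statement."""
    logger.debug("received request: %s", prompt)  # plaintext prompt lands in the log
    return f"summary of {len(prompt)} characters"


handle_request("ACME merger term sheet: draft offer terms")
captured = log_stream.getvalue()
print("ACME merger" in captured)
```

Nothing in this code is malicious or misconfigured by conventional standards, which is why policy and code review alone struggle to rule out this kind of exposure.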

Hardware isolation closes these gaps by shrinking the trust boundary. Secure enclaves enforce isolation at the processor level, ensuring that sensitive AI workloads remain protected even if surrounding systems are compromised. For enterprise AI, this shift from assumed trust to enforced isolation is critical for deploying models in production with meaningful security guarantees.

How Ollm applies secure enclaves to enterprise AI

Ollm applies secure enclaves as the execution foundation for enterprise AI, not as an optional security add-on. Its architecture is built so that AI requests are processed inside hardware-isolated environments from the moment sensitive data enters the system. Prompts, context, and intermediate inference data are decrypted only within secure enclaves, ensuring protection during runtime rather than relying solely on transport- or storage-level encryption.

This design allows Ollm to enforce zero-knowledge execution across aggregated LLM providers. Even though Ollm routes requests to hundreds of models through a single API, the data required for inference remains isolated from infrastructure, operators, and model providers. The surrounding systems handle routing, policy enforcement, and orchestration, while the enclave boundary ensures that plaintext data is never exposed outside the protected execution environment.

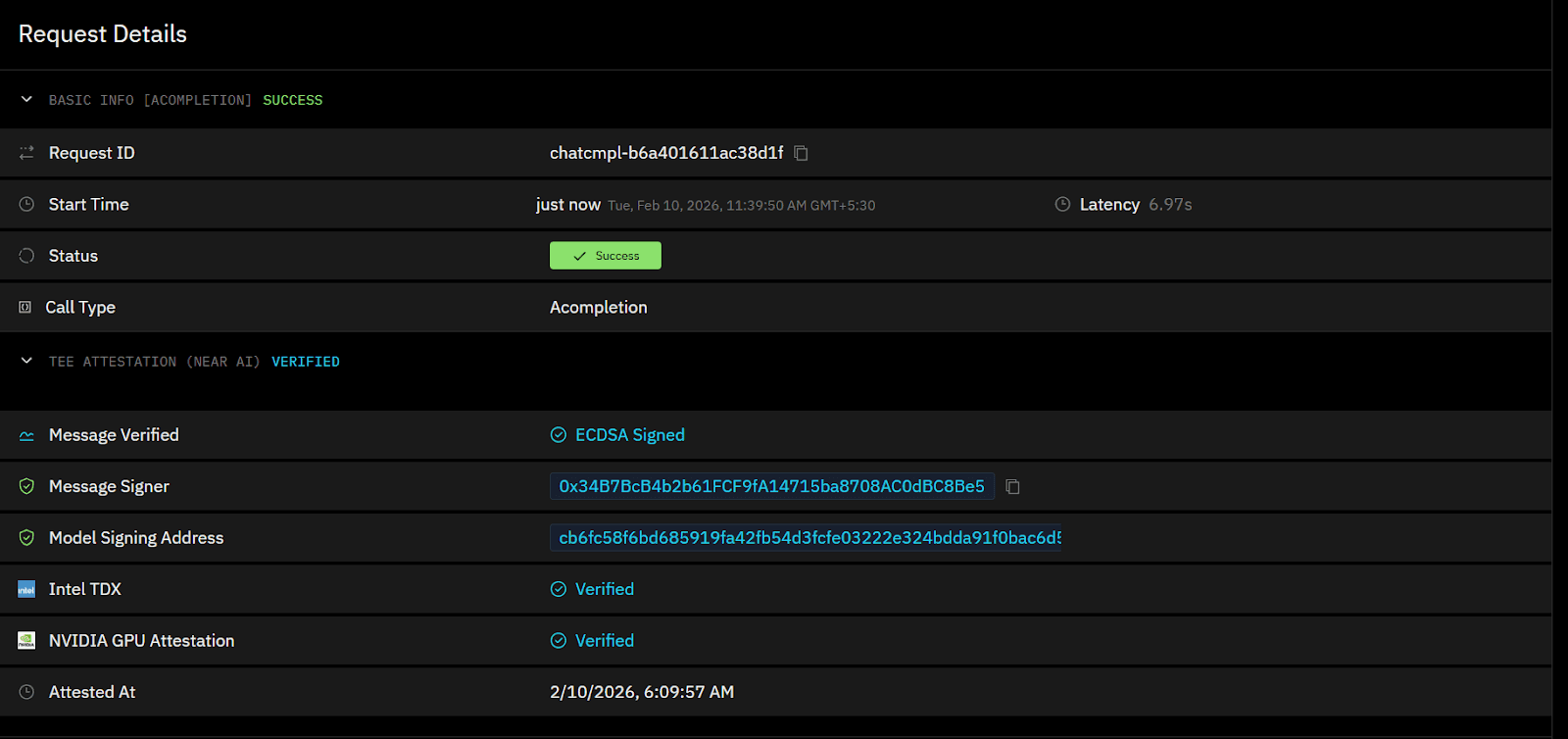

Ollm exposes hardware attestation signals so enterprises can independently verify how their AI requests are executed. Each inference request is backed by cryptographic attestation metadata tied to the underlying secure enclave environment, including CPU-level trusted execution and GPU-level isolation. This allows teams to confirm that requests are processed inside verified hardware-backed enclaves rather than relying on platform assurances alone.

Several architectural principles define how Ollm uses secure enclaves in practice:

Hardware-first isolation: Sensitive AI workloads execute inside CPU-enforced enclave boundaries.

End-to-end encryption: Data is encrypted in transit, encrypted at rest, and protected during execution.

Provider-agnostic execution: Models can be swapped or routed without expanding trust boundaries.

Verifiable privacy: Enclave attestation enables independent verification of the execution environment.

By anchoring its AI gateway in hardware isolation, Ollm reduces trust assumptions across the entire AI stack. Enterprises can adopt multiple LLMs without granting them access to sensitive data, making secure enclaves a core enabler for deploying AI safely in high-risk and regulated environments.

What makes Ollm’s secure enclave approach different

Ollm’s secure enclave approach is defined by how deeply isolation is integrated into the AI execution lifecycle. Secure enclaves are not limited to protecting a narrow function or a single model call. Instead, they are used to isolate sensitive data throughout inference, regardless of which underlying LLM provider is selected. This ensures that privacy guarantees remain consistent even as models, vendors, or routing strategies change.

Ollm enforces zero data retention by design. Prompts, responses, and inference context are not stored after execution, which removes the risk of sensitive data being accessed later through logs, databases, or internal systems. By eliminating persistent storage of AI inputs and outputs, Ollm reduces exposure not just during inference, but also after execution has completed.

Compliance is supported through verifiable TEE attestation proofs, not policy assurances. Ollm provides cryptographic evidence that inference is executed inside approved hardware-backed enclaves. This allows security and compliance teams to validate runtime isolation during audits, rather than relying on contractual claims or documentation alone.

Encryption and isolation are enforced continuously rather than selectively. Requests that enter Ollm encrypted are decrypted only inside hardware-protected enclaves and are never exposed to external systems in plaintext. Memory, execution state, and intermediate results remain protected against inspection or leakage. This reduces reliance on operational discipline and contractual assurances, replacing them with hardware-backed guarantees.

Several characteristics distinguish this approach at an architectural level:

Isolation at the hardware layer: Security is enforced by the CPU rather than by software policy.

Uniform trust boundaries: All models operate under the same enclave-backed constraints.

Independent verification: Attestation enables enterprises to confirm that approved code is running in a protected environment.

Scalable aggregation: Hundreds of models can be accessed without weakening security guarantees.

Hardware attestation visibility: Each request includes verifiable attestation data for the enclave environment, including CPU-based trusted execution and GPU isolation, allowing customers to confirm that inference ran inside encrypted, hardware-protected boundaries.

For enterprises, this means security properties do not degrade as AI usage scales. As teams adopt new models or expand AI-driven workflows, Ollm maintains consistent data protection without increasing exposure or operational risk.

Ollm is designed to scale with enterprise AI demand without changing its security model. Capacity can be added as workloads grow, and organizations anticipating sustained or high-volume inference can work with Ollm to reserve capacity in advance. This allows teams to scale AI adoption predictably while maintaining the same enclave-backed isolation and zero-knowledge guarantees.

Why secure enclaves are becoming foundational for enterprise AI

Secure enclaves are shifting from a specialized security feature to a foundational requirement for enterprise AI. As organizations move AI systems into production, the data involved increasingly includes core intellectual property, regulated information, and customer data. Traditional security models that rely on perimeter controls, in-transit encryption, or trust in providers are no longer sufficient once inference begins and data is in memory.

Regulatory and compliance pressures are accelerating this shift. Enterprises are expected to demonstrate not only that data is encrypted but also that it is inaccessible to unauthorized parties during processing. Hardware-backed isolation provides a concrete way to prove this. Attestation and verifiable execution enable security teams to validate how AI workloads run, rather than relying on policy statements or contractual assurances.

Enterprise AI adoption is also changing the risk profile of infrastructure. AI systems are becoming embedded in decision-making, automation, and customer interaction flows. Failures or data leaks at this layer have a greater impact than those in earlier experimental deployments. Secure enclaves reduce this risk by narrowing trust boundaries and enforcing isolation at the processor level.

As AI becomes a core enterprise capability, hardware-enforced security is emerging as a baseline expectation. Secure enclaves provide the technical foundation for deploying AI systems with confidence, making them central to the next generation of enterprise AI architectures.

Conclusion

Secure enclaves provide the missing security layer for enterprise AI by protecting sensitive data during execution. Throughout this article, we examined how AI workloads expose data in memory, why software-only controls fail to close that gap, and how hardware-backed isolation enables zero-knowledge AI inference. Secure enclaves shift enterprise AI security from trust-based assumptions to verifiable, processor-enforced guarantees, making them suitable for regulated and high-risk environments.

For enterprises deploying AI beyond experimentation, the next step is architectural, not incremental. Evaluating how AI requests are routed, where inference occurs, and whether data is protected while in use is critical. Platforms like Ollm apply secure enclaves at the core of AI execution, enabling organizations to adopt multiple models without expanding data exposure.

Enterprises exploring production-grade AI should assess whether their current AI stack can provide verifiable runtime isolation. If sensitive data, internal context, or regulated information is involved, secure enclave–based architectures are a practical foundation for moving forward with confidence.

FAQ

1. How do secure enclaves protect AI data during inference?

Secure enclaves protect AI data by isolating prompts, context, and intermediate inference states inside hardware-encrypted memory. Data is decrypted only within the CPU boundary and cannot be accessed by the operating system, hypervisor, or cloud administrators. This provides protection for data in use, closing the gap left by encryption at rest and in transit.

2. What is zero-knowledge AI inference and why does it matter for enterprises?

Zero-knowledge AI inference ensures that sensitive data is never visible to infrastructure providers or model vendors during execution. Enterprises can run LLM inference while preventing third parties from inspecting prompts or outputs. This model is critical for regulated industries and proprietary workloads that require verifiable data isolation.

3. How is Ollm different from traditional AI gateways in terms of security?

Ollm is built around secure enclaves as the execution foundation rather than relying on software isolation. Prompts and inference data are processed inside hardware-protected environments, enabling zero-knowledge execution across multiple LLM providers. This reduces trust assumptions and provides verifiable privacy guarantees that software-only gateways cannot offer. Ollm also exposes hardware attestation metadata, including CPU and GPU enclave verification, so enterprises can independently validate how each inference request was executed.

4. Can Ollm support multiple LLM providers without exposing sensitive data?

Yes. Ollm aggregates hundreds of LLMs behind a single API while maintaining consistent security guarantees. Secure enclaves ensure that sensitive data remains isolated during inference, regardless of which underlying model is used. This allows enterprises to switch or evaluate models without expanding data exposure.

5. Are secure enclaves required for enterprise AI compliance?

Secure enclaves are not always mandatory, but they are increasingly expected for high-risk and regulated AI workloads. Compliance frameworks often require proof that sensitive data is not accessible during processing. Hardware-backed isolation and attestation provide a practical way to meet these requirements as AI systems move into production.