
TL;DR

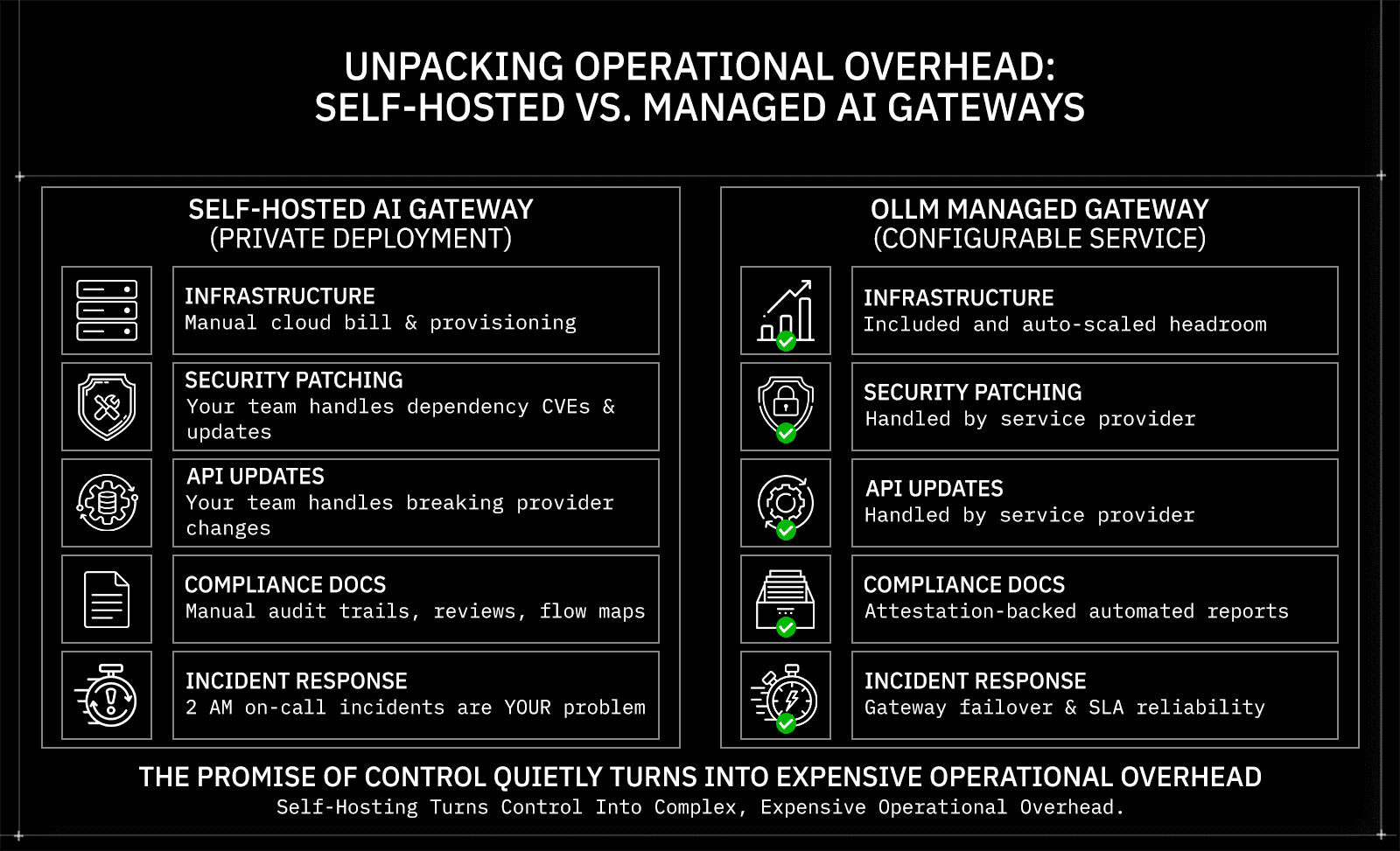

Self-hosted AI gateways carry significant hidden TCO, including engineering maintenance, security patching, compliance documentation, and incident response, which rarely appear in initial cost estimates but consistently dominate the 12-month bill.

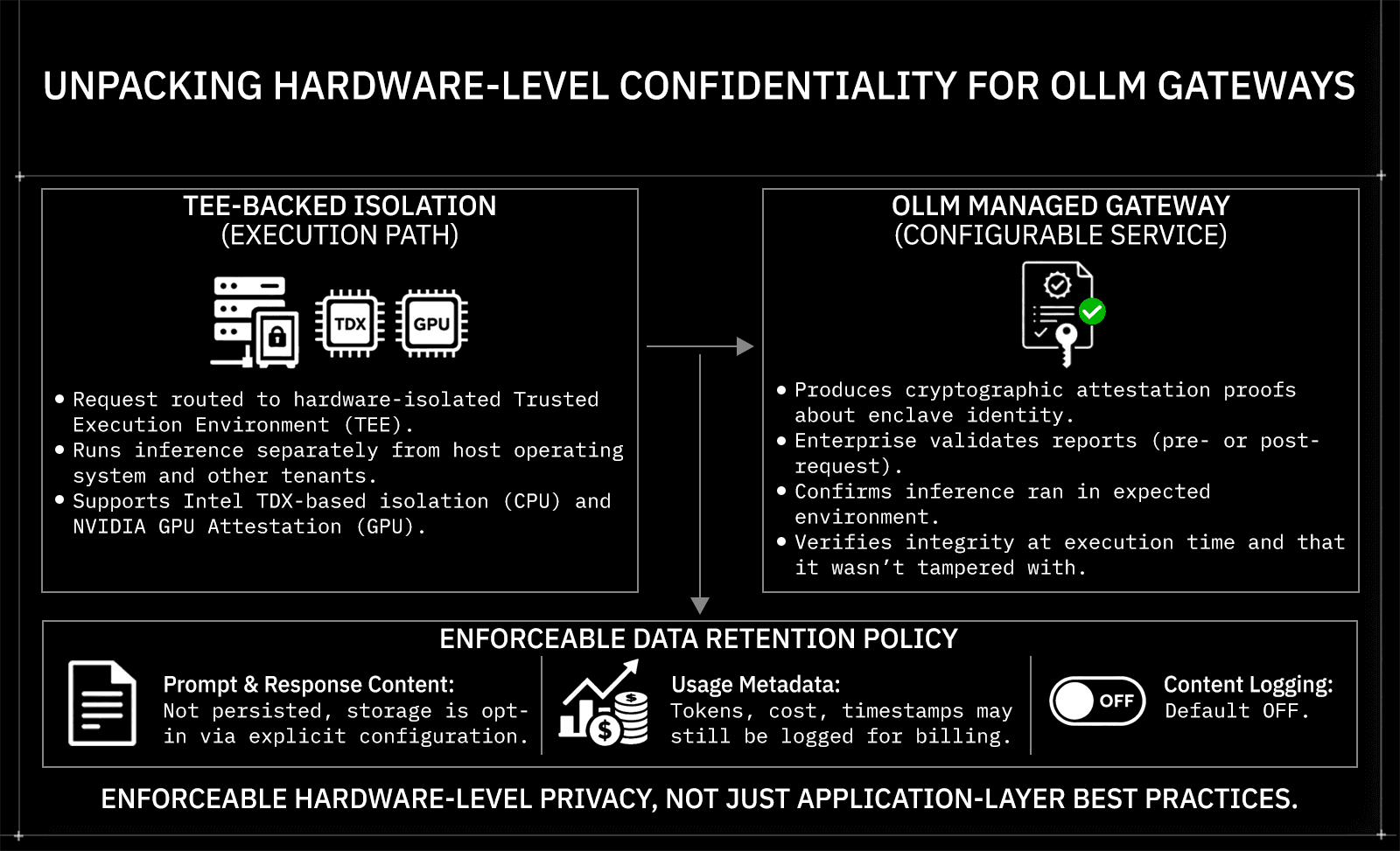

Encryption in transit isn't enough; the real security gaps in self-hosted setups are prompt/response persistence, lack of hardware-level isolation, and the absence of any cryptographically verifiable execution evidence.

TEE-backed inference (Intel TDX + NVIDIA GPU Attestation, where supported) runs workloads in hardware-isolated environments, producing cryptographic attestation evidence about execution integrity, something no self-hosted gateway can replicate at the application layer.

OLLM defaults to not storing prompt and response content at the gateway layer; usage metadata may still be logged, and content logging is opt-in via explicit configuration, making zero-retention an enforceable infrastructure default, not an app-level best practice.

Scaling with a managed confidential gateway is a procurement decision, not an engineering project. Rate-limit-aware routing, load balancing, and failover are handled at the gateway layer, with reserved capacity available for high-volume use cases.

As AI moves deeper into production workflows, the infrastructure layer handling model access has become a serious engineering and security decision. AI gateways, the layer sitting between your application and LLM providers, determine how requests are routed, how costs are tracked, whether sensitive data is retained, and whether any of those guarantees are verifiable. Choosing between self-hosting that layer and using a managed confidential gateway isn't just an infrastructure preference; it's a decision with real compliance, security, and cost consequences.

This piece breaks down the true total cost of ownership on both sides, covering infrastructure, engineering overhead, security depth, hardware-level confidentiality, and scaling, so you can evaluate the trade-off with the full picture in front of you.

Why Teams Self-Host AI Gateways

A self-hosted AI gateway is a privately deployed middleware layer that your team owns, operates, and maintains. It handles routing between your application and LLM providers, enforces rate limits, tracks usage, and manages authentication, all running on infrastructure you control. For teams with strict data residency requirements or those operating under HIPAA, SOC 2, or the EU AI Act, that level of control over the request path is a legitimate architectural requirement.

The gap shows up when you move from planning to production. A self-hosted gateway is not a deploy-and-forget system. When OpenAI ships a breaking change to their API response format, your routing logic needs to be updated before it silently breaks downstream. When a CVE drops against a dependency in your gateway stack, your team owns the patch cycle. When your inference traffic doubles because a new feature ships, someone needs to have already provisioned the headroom. None of that is accounted for in a compute bill. A mid-sized engineering team can easily spend 20 to 40 percent of a senior engineer's annual capacity keeping a self-hosted gateway operational before writing a single line of product code.

In regulated environments, the overhead compounds further. A healthcare platform handling patient-adjacent queries needs more than a correctly configured gateway. It needs documented audit trails, recurring security reviews, and a clear data flow map that can withstand a SOC 2 audit or an EU AI Act compliance assessment. That documentation doesn't generate itself. When something breaks at 2 am, whether a provider endpoint goes down, a rate limit triggers unexpectedly, or a TLS certificate expires mid-request, that is your team's incident to resolve, not a vendor's SLA to enforce.

| Cost Category | Self-Hosted | OLLM |
| --- | --- | --- |
| Infrastructure | Your cloud bill | Included |
| Security patching | Your team | Handled |
| Provider API updates | Your team | Handled |
| Compliance documentation | Manual | Attestation-backed |
| Incident response | On-call engineering | Gateway-layer failover |

Control is valuable. But unaccounted operational overhead quietly turns a cost-saving decision into one of your most expensive infrastructure choices.

What an AI Gateway Actually Does

AI gateways sit between your application and the underlying LLM providers, handling the operational layer so your application doesn't have to. At the core, that means a unified API across hundreds of models, centralized cost tracking, rate-limit enforcement, and routing logic that keeps inference running even when a provider has issues.

Model selection in a gateway can work a few different ways:

App-driven: the application specifies a model alias explicitly, and the gateway routes to the correct provider and deployment

Policy-driven: the gateway selects among approved deployments based on latency, cost, or availability

Hybrid: the application specifies a model alias, and the gateway handles fallback, load balancing, and rate-limit-aware routing around it
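The three selection modes above can be sketched in a few lines. This is a minimal illustration, not any gateway's actual routing code: the `Deployment` fields, the `select` function, and the provider names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    alias: str          # model alias the application asks for
    provider: str       # hypothetical provider name
    latency_ms: float   # recently observed latency
    cost_per_1k: float  # cost per 1,000 tokens, in USD
    healthy: bool = True


def select(deployments, mode, alias=None, prefer="latency"):
    """Pick one deployment under the app, policy, or hybrid mode."""
    if prefer == "latency":
        metric = lambda d: d.latency_ms
    else:
        metric = lambda d: d.cost_per_1k

    if mode == "app":
        # App-driven: resolve the alias exactly, with no fallback.
        candidates = [d for d in deployments if d.alias == alias][:1]
    elif mode == "policy":
        # Policy-driven: any healthy approved deployment, best metric wins.
        candidates = [d for d in deployments if d.healthy]
    elif mode == "hybrid":
        # Hybrid: the app fixes the alias, the gateway picks among
        # healthy deployments that serve it.
        candidates = [d for d in deployments if d.alias == alias and d.healthy]
    else:
        raise ValueError(f"unknown mode: {mode}")

    if not candidates:
        raise LookupError("no deployment satisfies the request")
    return min(candidates, key=metric)


deployments = [
    Deployment("chat-large", "provider-a", 900, 0.50, healthy=False),
    Deployment("chat-large", "provider-b", 1200, 0.60),
    Deployment("chat-small", "provider-c", 300, 0.10),
]
```

With this data, the app-driven mode returns `provider-a` even though it is marked unhealthy, while the hybrid mode falls back to `provider-b` for the same alias. That difference is the practical reason to let the gateway handle fallback around an app-chosen alias.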

Most self-hosted gateways support some version of this. The difference with a managed confidential gateway like OLLM is what happens underneath that routing layer: how the inference request is executed, whether prompt/response content is persisted, and whether any of those guarantees are cryptographically verifiable.

Connecting to OLLM works through an OpenAI-style, OpenAI-SDK-compatible API for supported endpoints. The model string tells the gateway both which provider to route to and which model to run, with TEE-backed execution handled automatically for supported paths:

from openai import OpenAI

cURL

curl https://api.ollm.com/v1/chat/completions -H "Content-Type: application/json" -H "Authorization: Bearer your-api-key" -d '{
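Since the snippets above are cut off, here is a hedged, standard-library sketch of the kind of request they describe. The URL and header names are taken from the cURL line; the payload follows the common OpenAI-style chat format; the model string `example-provider/example-model` is a placeholder, not a real identifier. The request is only built, not sent.

```python
import json
import urllib.request

BASE_URL = "https://api.ollm.com/v1"  # from the cURL example above


def build_chat_request(api_key, model, user_message):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # assumed "provider/model" form; placeholder value below
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_chat_request(
    "your-api-key", "example-provider/example-model", "Hello"
)
# To actually send it: urllib.request.urlopen(request), then JSON-decode the body.
```

Because the endpoint is described as OpenAI-SDK-compatible, the same call should also be expressible through the `openai` client by pointing its `base_url` at the gateway, subject to the supported endpoints.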

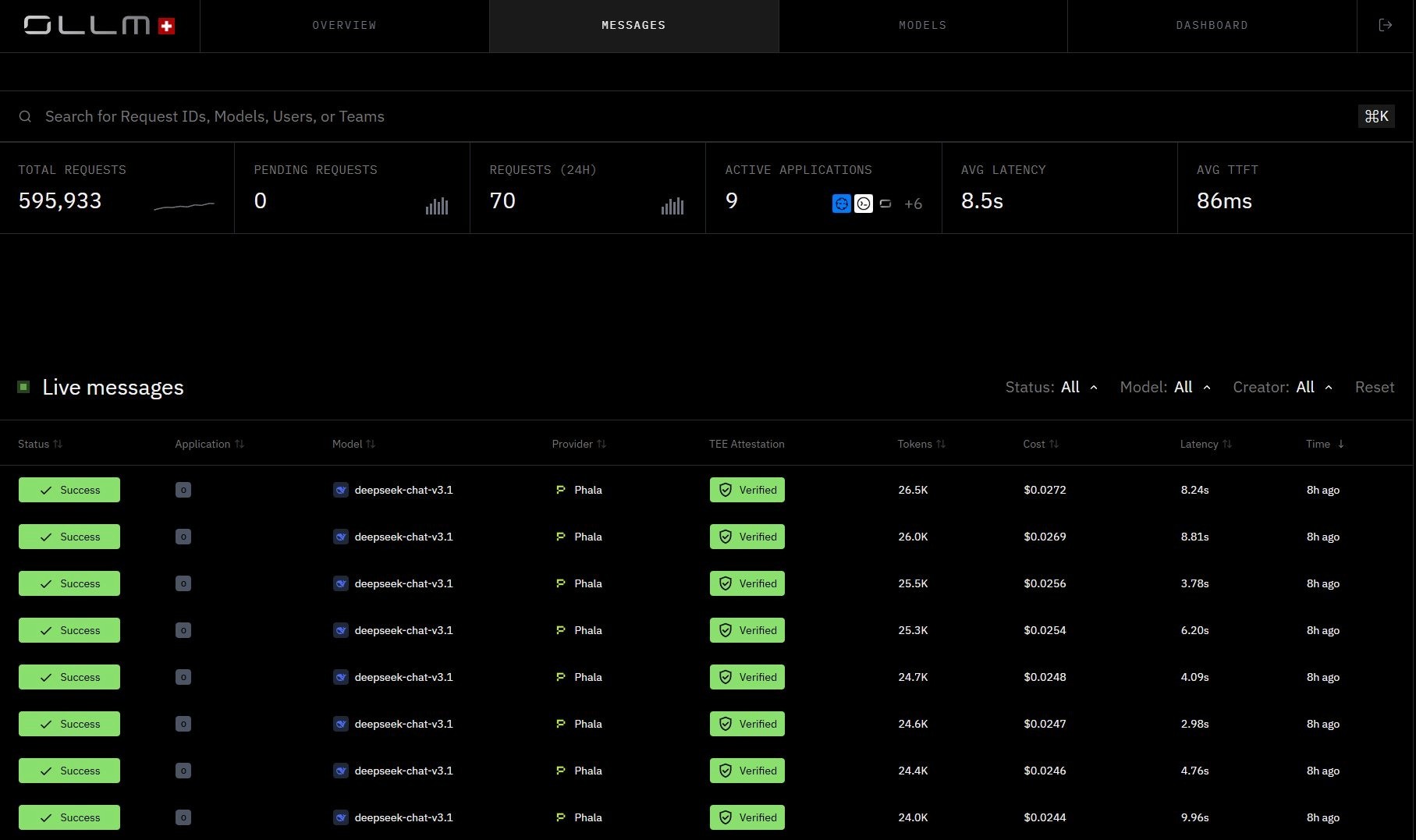

OLLM Messages view: live inference requests with per-request TEE attestation status, cost, latency, and token counts.

| Capability | Self-Hosted | OLLM |
| --- | --- | --- |
| Unified API | Yes | Yes |
| Multi-model access | Yes | Yes, hundreds of models across providers via one API |
| Failover | Depends on implementation | Available |
| Zero-retention defaults | Manual configuration | On by default; prompt/response content not stored unless explicitly enabled |
| Hardware-backed execution | Not available | TEE-backed (supported paths) |
| Cryptographic attestation | Possible on supported hardware (H100/H200), but requires custom implementation and maintenance | Built-in; Intel TDX + NVIDIA GPU Attestation included by default |

The Real Total Cost of Ownership

Infrastructure costs are the easy part to calculate. Everything else is where self-hosted gateways quietly get expensive.

A self-hosted gateway requires compute, but it also requires the engineering capacity to keep it production-ready. That means someone owns provider API compatibility when upstream changes ship, someone maintains the security posture when vulnerabilities are disclosed, and someone is on-call when routing breaks under load. In regulated environments, add compliance documentation, audit trails, and periodic security reviews on top of that.

The categories that rarely show up in initial estimates are engineering time, compliance overhead, observability pipelines, and scaling management. Engineering time is the one most often missed: the 20 to 40 percent of a senior engineer's annual capacity noted earlier recurs every year the gateway stays in service.
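A quick worked calculation shows how the engineering share changes the total. The dollar figures below are hypothetical placeholders chosen only to make the arithmetic concrete; substitute your own cloud bill and fully loaded engineer cost.

```python
def annual_tco(infra_monthly_usd, engineer_loaded_cost_usd,
               capacity_percent, other_annual_usd=0):
    """Annual cost = 12 months of infrastructure
    + the share of one engineer's loaded cost spent on the gateway
    + any other annual costs (audits, tooling, and so on)."""
    infra = infra_monthly_usd * 12
    engineering = engineer_loaded_cost_usd * capacity_percent / 100
    return infra + engineering + other_annual_usd


# Hypothetical inputs: $1,500/month of compute, $200,000 loaded engineer cost.
low = annual_tco(1_500, 200_000, capacity_percent=20)
high = annual_tco(1_500, 200_000, capacity_percent=40)
print(low, high)  # 58000.0 98000.0
```

Under these assumed inputs the compute bill is $18,000 of the total in both cases, so the engineering share is roughly two to four times the visible infrastructure cost.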

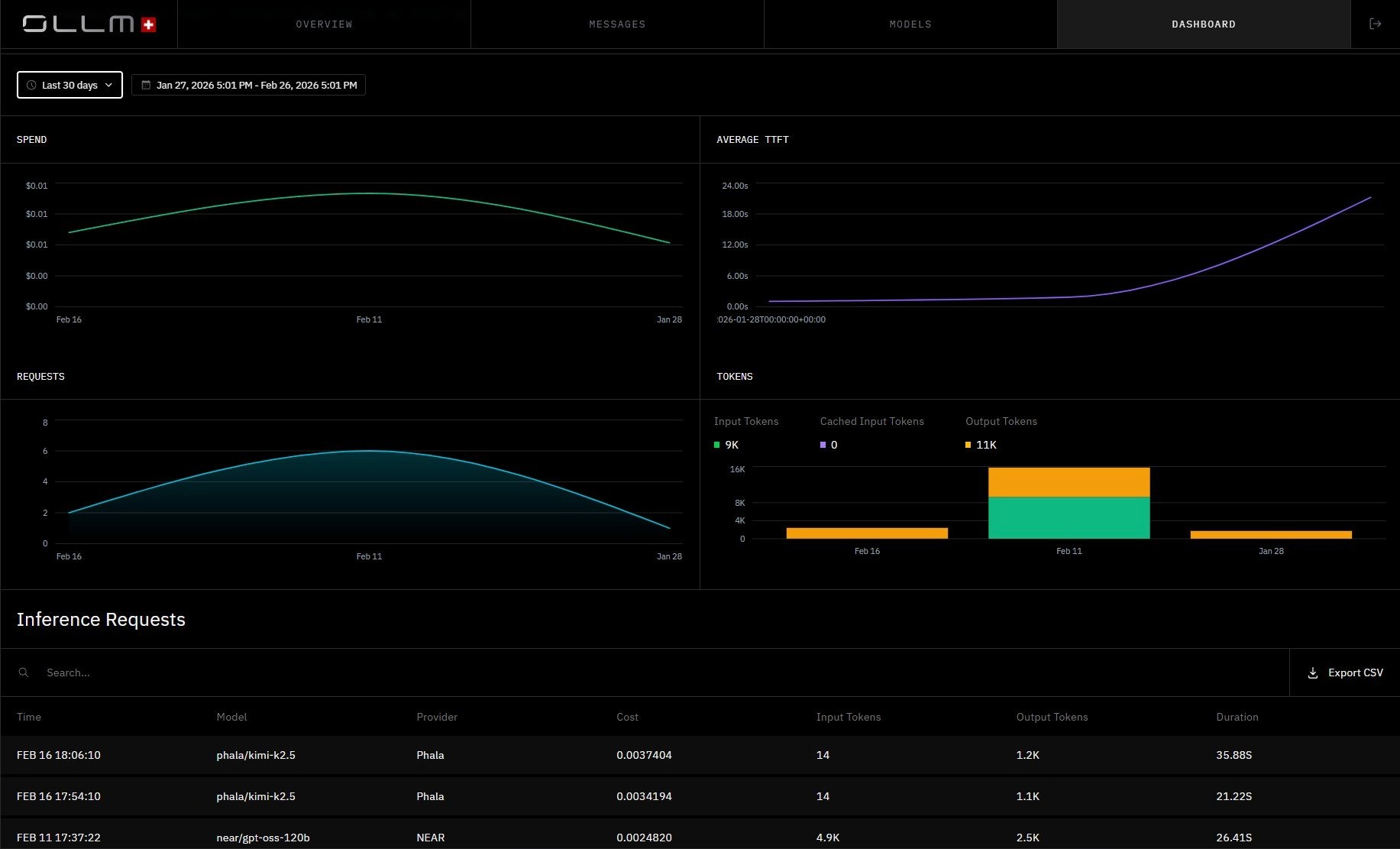

With OLLM, the operational layer is handled at the gateway level. Observability and cost tracking are centralized. Routing, failover, and rate-limit enforcement are built in. Scaling happens through centralized quota enforcement and load balancing without provisioning new infrastructure. For higher-volume needs, reserved capacity is available through sales rather than requiring a new infrastructure deployment.

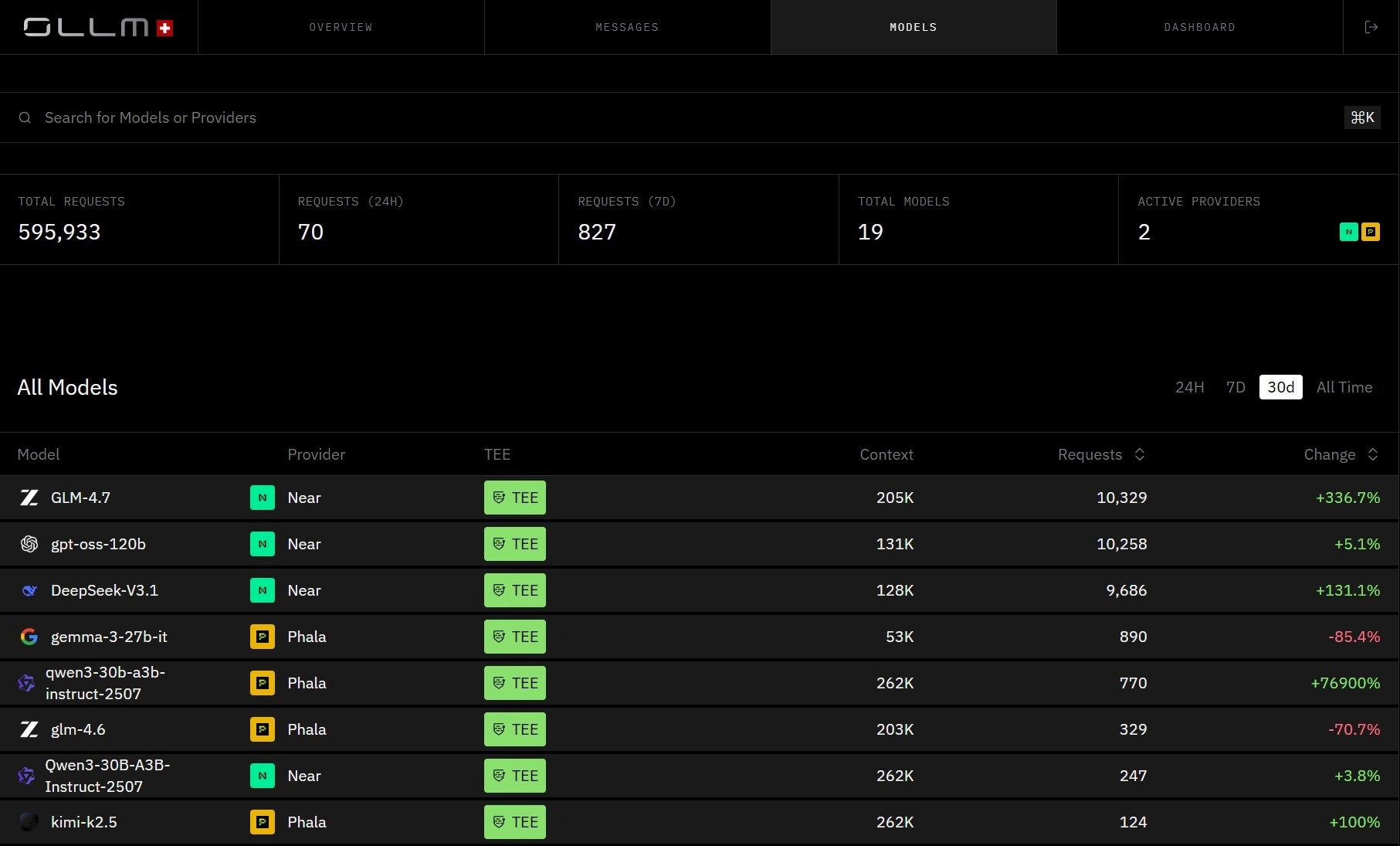

OLLM Dashboard: centralized spend tracking, request volume, average TTFT, and token usage across all providers in one view.

The compute bill is visible. The engineering burden is not, and that's what makes self-hosted TCO consistently underestimated.

Where Self-Hosted Gateways Fall Short on Security

Security is usually the primary argument for self-hosting. The assumption is that keeping infrastructure in-house ensures data safety. In practice, that assumption has some significant gaps.

The most common gap is the persistence of prompt and response. Many self-hosted gateway implementations log request and response content by default, for debugging, observability, or audit purposes. That creates a durable store of sensitive prompt data, making it a prime target for breaches. The question isn't just whether your gateway encrypts data in transit, but whether sensitive prompt-and-response content is being written to disk at all, and, if so, who can access it.
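One way to check whether content is reaching disk is to scan existing structured logs for payload-bearing fields. The sketch below assumes JSON-lines logs and a hypothetical set of field names (`CONTENT_FIELDS`); real gateways name these fields differently, so treat the list as a starting point.

```python
import json

# Hypothetical field names that usually indicate prompt/response payloads.
CONTENT_FIELDS = {"prompt", "messages", "response", "completion", "content"}


def find_content_fields(obj, path=""):
    """Return paths of non-empty keys whose names suggest payload content."""
    hits = []
    if isinstance(obj, dict):
        for key, value in obj.items():
            here = f"{path}.{key}" if path else key
            if key.lower() in CONTENT_FIELDS and value not in (None, "", [], {}):
                hits.append(here)
            hits.extend(find_content_fields(value, here))
    elif isinstance(obj, list):
        for index, item in enumerate(obj):
            hits.extend(find_content_fields(item, f"{path}[{index}]"))
    return hits


def audit_log_lines(lines):
    """Map 1-based line numbers to the content-like fields found on them."""
    report = {}
    for number, line in enumerate(lines, start=1):
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip non-JSON lines rather than guess at their format
        hits = find_content_fields(record)
        if hits:
            report[number] = hits
    return report


sample = [
    '{"ts": "2024-01-01T00:00:00Z", "tokens": 42, "cost_usd": 0.001}',
    '{"ts": "2024-01-01T00:00:01Z", "request": {"messages": '
    '[{"role": "user", "content": "patient notes"}]}}',
    "not json",
]
print(audit_log_lines(sample))
```

A clean report only shows that these field names are absent from the logs scanned; it says nothing about unstructured logs, traces, or upstream retention.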

The second gap depends on your self-hosted architecture. If your gateway routes requests to third-party provider APIs, you control the gateway layer but have no visibility into what happens at the inference level on the provider's side: what hardware the model runs on, whether the execution environment is isolated, or whether prompt content is retained upstream. If you're running models directly on your own hardware, you control the full stack, and there are no co-tenants to worry about. But unless that hardware supports confidential computing (such as H100s or H200s with the appropriate stack), you still have no cryptographic mechanism to prove execution integrity. You're relying on physical control of the environment, not verifiable evidence of what happened inside it.

The third gap is verifiability, and it applies in both cases. Even with full ownership of your infrastructure, there is no cryptographic way for an auditor, a compliance team, or a regulated client to independently verify that inference ran in an isolated environment, that prompt content wasn't persisted, or that the execution environment wasn't tampered with. Physical control and policy documentation are not the same as cryptographic proof, and in regulated environments, the difference increasingly matters.

These aren't edge cases. For teams operating under HIPAA, SOC 2, or the EU AI Act, these are exactly the questions that come up during security reviews.

How OLLM Handles Confidentiality at the Hardware Level

Most gateway-level security stops at the network layer: TLS in transit, access controls, and maybe encrypted storage. OLLM goes further by routing supported workloads to providers that run inference in hardware-isolated environments, pushing confidentiality controls down to the layer where inference actually happens.

TEE-backed execution is the core mechanism. Every model available through OLLM runs on confidential hardware; inference workloads execute inside hardware-isolated enclaves, separated from the host operating system and inaccessible to the underlying infrastructure.

OLLM Models view: every listed model shows its provider and TEE status. All models shown here run via TEE-backed provider paths (NEAR and Phala), with Intel TDX-based isolation and NVIDIA GPU attestation available where the stack supports it.

What makes this meaningful beyond marketing is attestation. TEE-backed execution can enable cryptographic attestation. Depending on the stack, technologies such as Intel TDX (for the confidential VM) and NVIDIA GPU attestation (for the GPU/device state) can generate evidence about the execution environment, including:

The identity and integrity of the confidential VM/workload measurement (per the attestation policy)

That the workload ran in a TEE-backed environment consistent with the policy

That the host can't directly read guest memory (within the TEE threat model)

Optionally, device/GPU claims when GPU attestation is available

Enterprises can validate attestation evidence as part of a trust policy, typically before provisioning secrets (and, in some designs, before sending sensitive prompts), and/or before accepting results. This model can replace purely trust-based isolation claims with cryptographically verifiable evidence about the execution environment, a fundamentally different standard than a vendor's compliance documentation.
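The "validate evidence before sending sensitive prompts" pattern can be sketched as a policy gate. This is an illustration only: `Evidence` and `TrustPolicy` are hypothetical shapes, the sketch assumes the quote's signature chain has already been verified by a real attestation verifier, and the nonce check is a common freshness measure added here as an assumption rather than something taken from the article.

```python
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    """Claims extracted from an attestation report (hypothetical shape)."""
    signature_valid: bool      # outcome of quote verification done elsewhere
    measurement: str           # hex digest identifying the VM/workload
    nonce: str                 # freshness value supplied by the verifier
    gpu_attested: bool = False


@dataclass(frozen=True)
class TrustPolicy:
    allowed_measurements: frozenset
    require_gpu: bool = False


def evaluate(evidence, policy, expected_nonce):
    """Return (ok, reasons); ok is True only if every check passes."""
    reasons = []
    if not evidence.signature_valid:
        reasons.append("signature chain not verified")
    if not hmac.compare_digest(evidence.nonce, expected_nonce):
        reasons.append("nonce mismatch")
    if not any(hmac.compare_digest(evidence.measurement, allowed)
               for allowed in policy.allowed_measurements):
        reasons.append("measurement not in allowlist")
    if policy.require_gpu and not evidence.gpu_attested:
        reasons.append("GPU attestation required but absent")
    return (not reasons, reasons)


def send_if_trusted(evidence, policy, expected_nonce, send):
    """Call send() only after the evidence satisfies the policy."""
    ok, reasons = evaluate(evidence, policy, expected_nonce)
    if not ok:
        raise PermissionError("; ".join(reasons))
    return send()
```

The design point is ordering: the sensitive call sits behind the check, so a failed or missing attestation blocks the prompt instead of merely being logged afterwards.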

On data retention, OLLM defaults to not storing prompt and response content at the gateway layer. To be precise about what that means:

Prompt/response content: not persisted by default; storage is opt-in via explicit configuration

Usage metadata (tokens, cost, timestamps): may still be logged for billing and reliability

Content logging: can be explicitly enabled when needed, but is off by default

This distinction matters because zero-retention isn't an absolute claim across all configurations; it's an enforceable default backed by policy and configuration guardrails, not just an app-level best practice.
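The split between metadata and content described above can be illustrated with a small logging helper. This is not OLLM's configuration schema; `LoggingConfig`, `log_content`, and the record fields are hypothetical names used to show what "content logging off by default" looks like in code.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class LoggingConfig:
    log_content: bool = False  # content logging is opt-in


def build_log_record(config, model, prompt, response,
                     prompt_tokens, completion_tokens, cost_usd, now=None):
    """Build a usage record; include payloads only when explicitly enabled."""
    record = {
        "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
        "model": model,
        "prompt_tokens": prompt_tokens,
        "completion_tokens": completion_tokens,
        "cost_usd": cost_usd,
    }
    if config.log_content:
        record["prompt"] = prompt
        record["response"] = response
    return record


default_record = build_log_record(
    LoggingConfig(), "example-model", "hi", "hello", 1, 1, 0.0001
)
opted_in_record = build_log_record(
    LoggingConfig(log_content=True), "example-model", "hi", "hello", 1, 1, 0.0001
)
```

Because the default value lives in the config type rather than in each caller, a service that never sets `log_content` cannot start persisting payloads by accident.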

Scaling Without the Operational Burden

Scaling an AI gateway sounds straightforward until you're actually doing it. With self-hosted infrastructure, scaling means capacity planning ahead of demand, provisioning new compute, managing failover configurations, and ensuring rate limits are enforced consistently across an expanding deployment. Every spike in usage is an infrastructure problem before it's a product problem.

OLLM handles scaling at the gateway layer. Rate-limit-aware routing, load balancing, and failover are built into how requests are executed, not something your team needs to configure and maintain separately. If a provider hits a rate limit or becomes unavailable, the gateway routes around it based on the execution policy, without requiring manual intervention.
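For comparison, this is roughly the failover logic a team would otherwise write and maintain itself. It is a simplified sketch with hypothetical exception types and provider callables, not a description of how OLLM implements routing.

```python
class RateLimited(Exception):
    """Raised by a provider call that hit its rate limit."""


class ProviderUnavailable(Exception):
    """Raised by a provider call when the endpoint is down."""


def route_with_failover(providers, request):
    """Try (name, call) pairs in order; skip rate-limited or down providers."""
    errors = []
    for name, call in providers:
        try:
            return name, call(request)
        except (RateLimited, ProviderUnavailable) as exc:
            errors.append(f"{name}: {type(exc).__name__}")
    raise RuntimeError("all providers failed: " + ", ".join(errors))


def limited(_request):
    raise RateLimited()


def healthy(request):
    return f"echo:{request}"


result = route_with_failover(
    [("provider-a", limited), ("provider-b", healthy)], "hi"
)
```

A production version would also need backoff, per-provider quotas, and health tracking, which is the maintenance surface the paragraph above refers to.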

For teams with predictable high-volume usage, the practical path is straightforward:

For immediate needs: load additional credits to increase capacity without any infrastructure changes

For sustained high-volume usage: contact sales to discuss reserving capacity, which ensures shared quota limits don't constrain availability during peak periods

The broader point is that scaling with OLLM is a procurement and configuration decision, not an engineering project. Teams don't need to size instances, pre-provision headroom, or build their own failover logic. That operational surface simply doesn't exist at the application layer; it's managed at the gateway level through configuration.

Making the Call: Is Self-Hosting Worth It?

Self-hosting trades operational burden for control, and that trade only makes sense if the control you gain is worth what you're giving up in engineering time, security depth, and verifiability. For teams in regulated environments, the security properties that actually matter (hardware-level isolation, cryptographic attestation evidence, and verifiable zero-retention) are difficult to replicate on self-hosted infrastructure, regardless of how well-configured it is.

To evaluate your true TCO, start with three questions. What is the fully loaded engineering cost of maintaining your gateway over 12 months? Can your current setup provide cryptographic proof of execution integrity to auditors? Are prompt and response payloads being persisted anywhere in your logging pipeline? Those three questions alone quickly reframe the conversation.

Confidential AI infrastructure is increasingly expected in regulated environments, not optional. To see how OLLM's unified confidential gateway handles execution integrity, zero-retention defaults, and provider routing, explore the platform at ollm.com or reach out to discuss reserved capacity for your use case.

FAQs

1. What is the difference between TEE-based AI inference and standard encrypted inference?

Standard encrypted inference protects data in transit and at rest, but the data is decrypted during computation, meaning the host OS, hypervisor, or a compromised process can theoretically access it. TEE-based inference runs inside a hardware-isolated enclave (such as Intel TDX) where even the host cannot inspect the workload, and cryptographic attestation proofs verify that the environment wasn't tampered with before or during execution.

2. How does OLLM's zero-retention policy work in practice, and what data is still logged?

OLLM defaults to not persisting prompt and response content at the gateway layer. Usage metadata (tokens consumed, cost, and timestamps) may still be logged for billing and reliability purposes. Prompt and response content logging is opt-in via explicit configuration, meaning it won't appear in spend logs unless deliberately enabled.

3. What are the compliance implications of prompt data persistence in self-hosted AI gateways?

Under frameworks such as HIPAA and the EU AI Act, durable storage of sensitive prompt data can become a regulated data asset, subject to access controls, breach-notification requirements, and audit obligations. Self-hosted gateways that log request and response content by default can inadvertently create compliance liabilities that weren't accounted for in the original architecture decision.

4. How does Intel TDX attestation work in an AI inference pipeline?

Intel TDX (Trust Domain Extensions) creates hardware-isolated virtual machines called Trust Domains. During inference, an attestation report is generated that cryptographically captures the Trust Domain's identity, configuration, and integrity state. An auditor, compliance team, or automated verification workflow can validate this report as part of a trust policy, typically before provisioning secrets or sending sensitive prompts, and/or before accepting results.

5. How does OLLM's single API simplify working with multiple LLM providers?

Rather than managing separate integrations, API keys, and data-handling agreements for each provider, OLLM gives you access to hundreds of models across multiple providers through a single OpenAI-compatible API. You explicitly select the model you want; OLLM authenticates the request, routes it to the correct provider's TEE-backed environment, and returns the response along with attestation artifacts. There is no automatic model substitution or background routing; model choice stays entirely in your control.