TL;DR

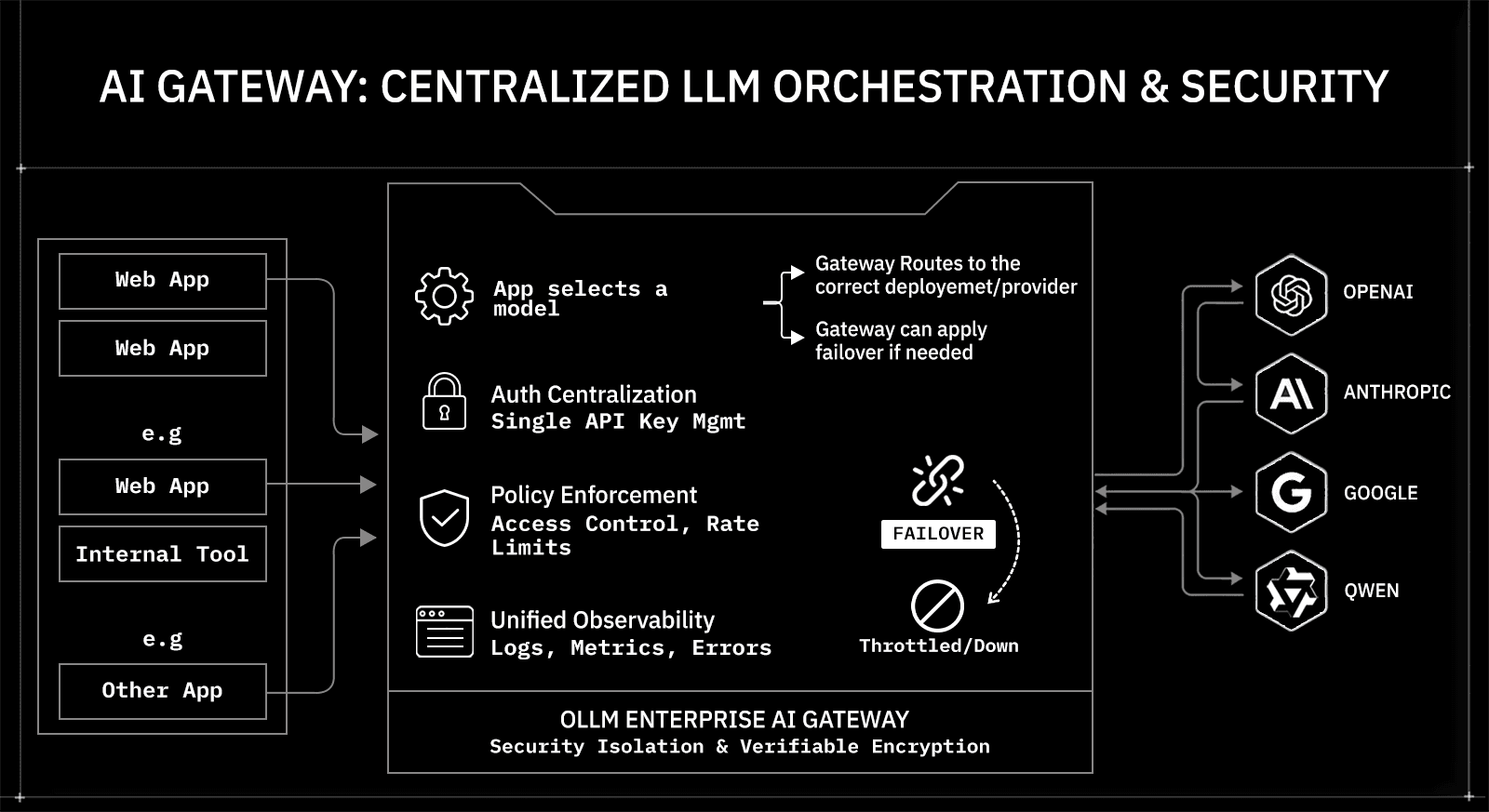

Direct model-specific integrations fragment architecture across providers, increasing code complexity, schema adapters, billing silos, compliance reviews, and the outage blast radius as LLM usage scales.

An AI gateway centralizes routing, authentication, rate limiting, observability, and cost tracking behind a single API, reducing integration sprawl while improving operational visibility and control across providers.

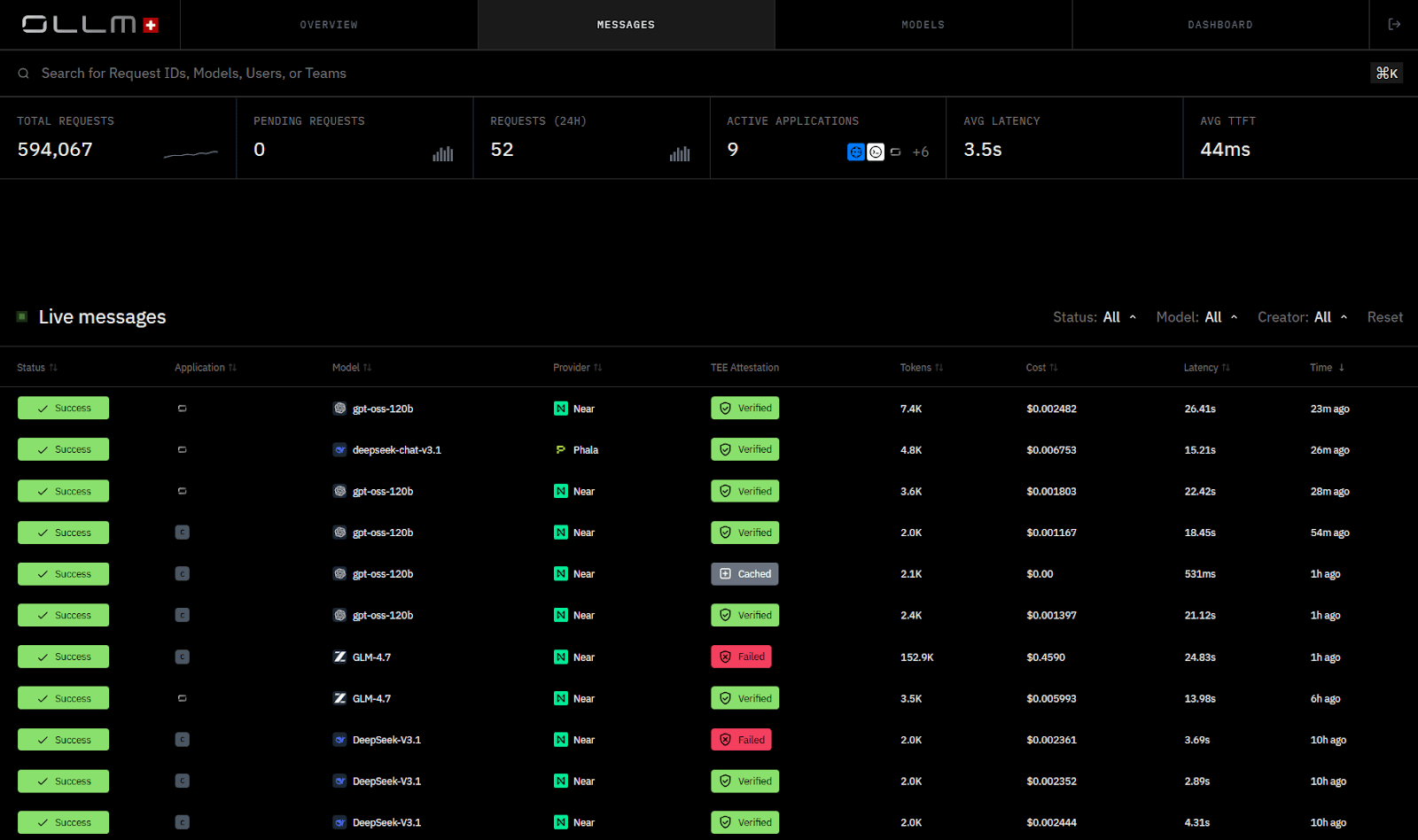

OLLM defaults to not persistently storing prompt or response content, minimizing historical inference exposure and reducing the scope of breach impact (metadata logging and prompt storage can be configured explicitly when required).

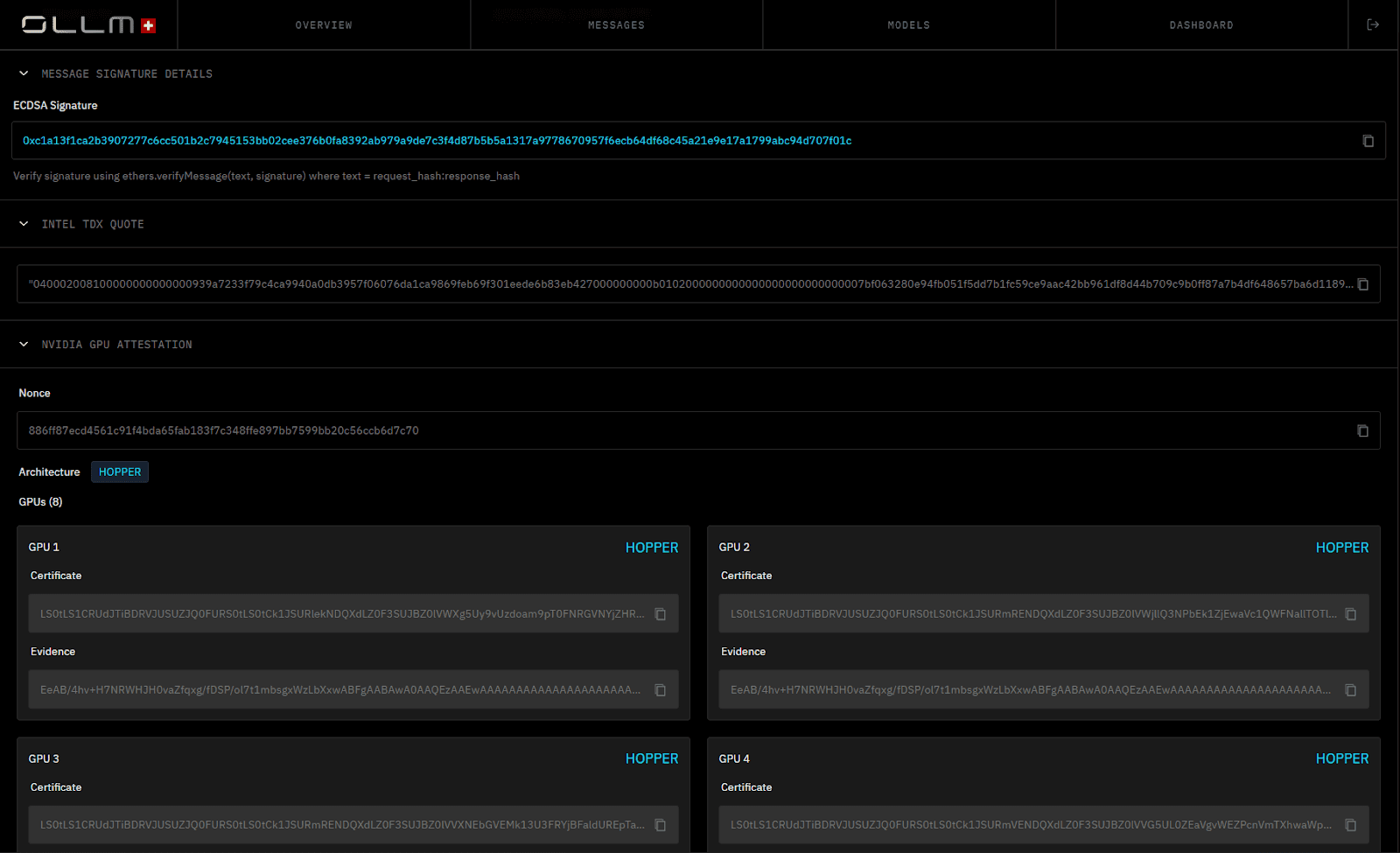

Hardware-backed Trusted Execution Environments with Intel TDX and NVIDIA GPU attestation provide cryptographic evidence of enclave and VM identity and integrity, strengthening runtime isolation guarantees beyond contractual assurances.

Scaling with OLLM occurs at the gateway layer through centralized quota enforcement, rate-limit-aware routing, load balancing, and multi-provider failover, reducing rate-limit friction and limiting provider outage blast radius without requiring application rewrites.

Artificial intelligence has moved from isolated experiments to production infrastructure. Enterprises now run customer support automation, internal copilots, data analysis pipelines, and agent workflows directly on large language models. At this stage, model access is no longer a prototype decision. It becomes an architectural decision.

Architecture determines how systems scale, how security is enforced, and how compliance is verified. Teams typically choose between direct, model-specific integrations or an AI gateway that aggregates and secures multiple providers behind a single API. Each approach carries tradeoffs in complexity, flexibility, and risk.

This comparison examines those tradeoffs in detail, focusing on how OLLM approaches enterprise security, zero data retention, and verifiable runtime isolation.

What Is an AI Gateway and How Does It Work?

An AI gateway sits between enterprise applications and multiple large language model providers, such as GPT-class APIs, Claude-class APIs, open-weight models, and domain-tuned private deployments. It provides a unified API layer that routes inference requests, enforces security controls, and manages interactions with providers through a single endpoint. Instead of wiring each application directly to a specific model vendor, services call the gateway, which forwards each request to the appropriate provider.

In production systems, this architecture supports concrete use cases:

Customer support automation: Route high-volume chat queries to a cost-optimized model, while escalating sensitive billing disputes to a higher-accuracy model with stricter isolation controls.

Internal copilots for engineering or legal teams: Restrict certain departments to approved models and prevent prompt data from reaching non-compliant providers.

Document processing pipelines: Send summarization workloads to a fast inference model while routing contract analysis to a model configured for longer context windows.

AI agent workflows: Select model aliases in the application (or via policy-configured routing groups), while the gateway enforces execution policies, such as fallback, load balancing, and rate limiting.

High-availability SaaS APIs: Automatically reroute traffic if a provider experiences rate limiting, regional degradation, or API outages.

The gateway centralizes cross-provider concerns that would otherwise live inside application code. Credential management, routing policies, usage limits, observability, and failover logic operate within a single control layer rather than being replicated across services.
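As a rough illustration of what "routing policy in one control layer" can mean, the sketch below maps workloads from the use cases above to model aliases. The workload names, alias names, and the `require_tee` flag are hypothetical and are not OLLM identifiers; the point is only that the mapping lives in one place rather than in each service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model_alias: str   # hypothetical alias, resolved to a deployment by the gateway
    require_tee: bool  # whether the request must use a TEE-backed execution path


# Hypothetical routing table; a real gateway would load this from configuration.
ROUTES = {
    "support_chat": Route("fast-chat", require_tee=False),
    "billing_dispute": Route("high-accuracy", require_tee=True),
    "contract_analysis": Route("long-context", require_tee=True),
}

# Unknown workloads fall back to the strictest route rather than the cheapest.
DEFAULT_ROUTE = Route("high-accuracy", require_tee=True)


def select_route(workload: str) -> Route:
    """Return the configured route for a workload, or the strict default."""
    return ROUTES.get(workload, DEFAULT_ROUTE)
```

Defaulting to the strict route is a design choice made for this sketch: a misconfigured workload then fails toward more isolation instead of less.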

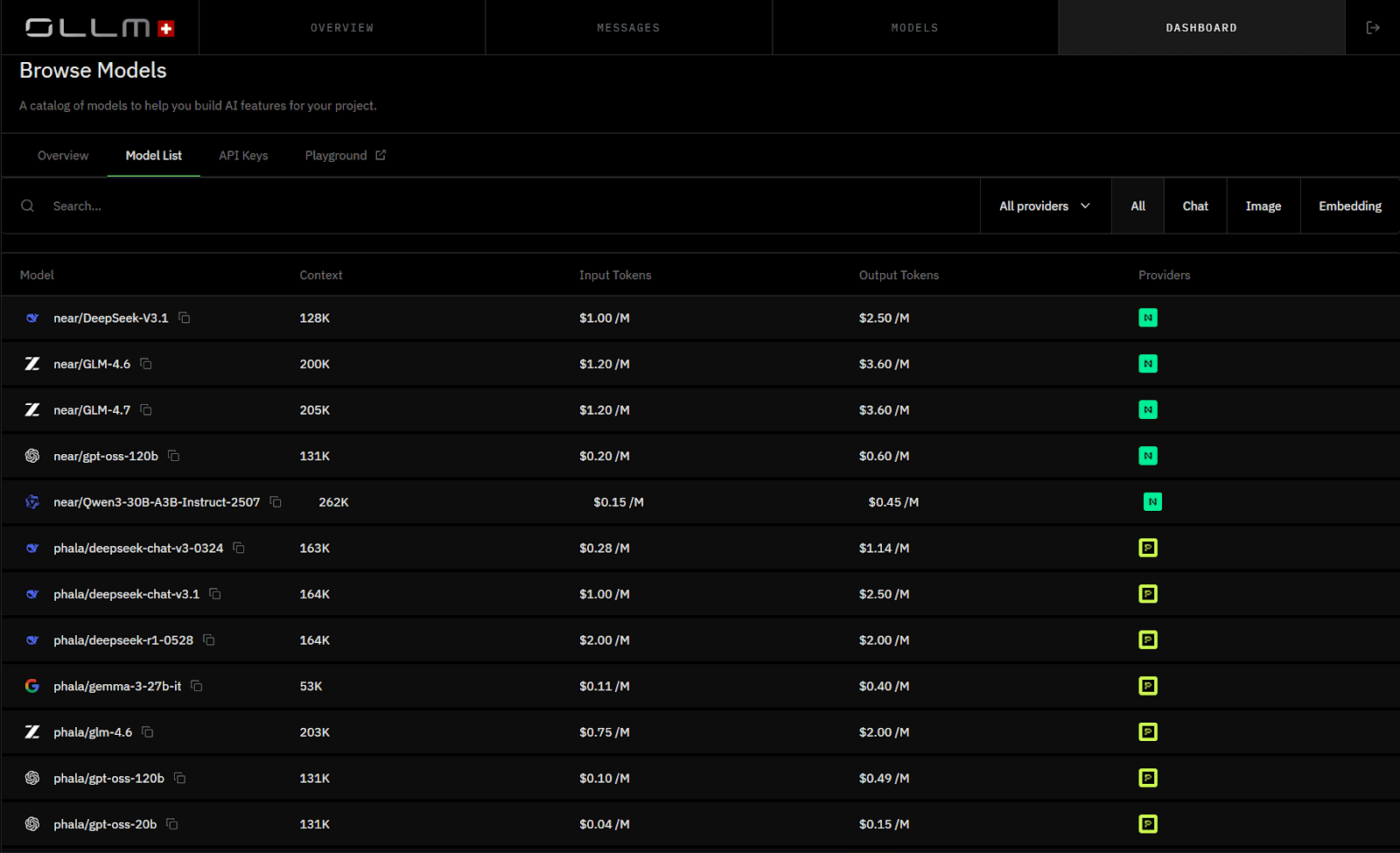

OLLM implements this pattern as an enterprise AI gateway with a strong emphasis on isolation and privacy. It aggregates high-security LLM providers behind a single API with privacy-preserving defaults and TEE-backed execution paths for supported providers and models. Retention and logging behavior are enforced through configuration controls.

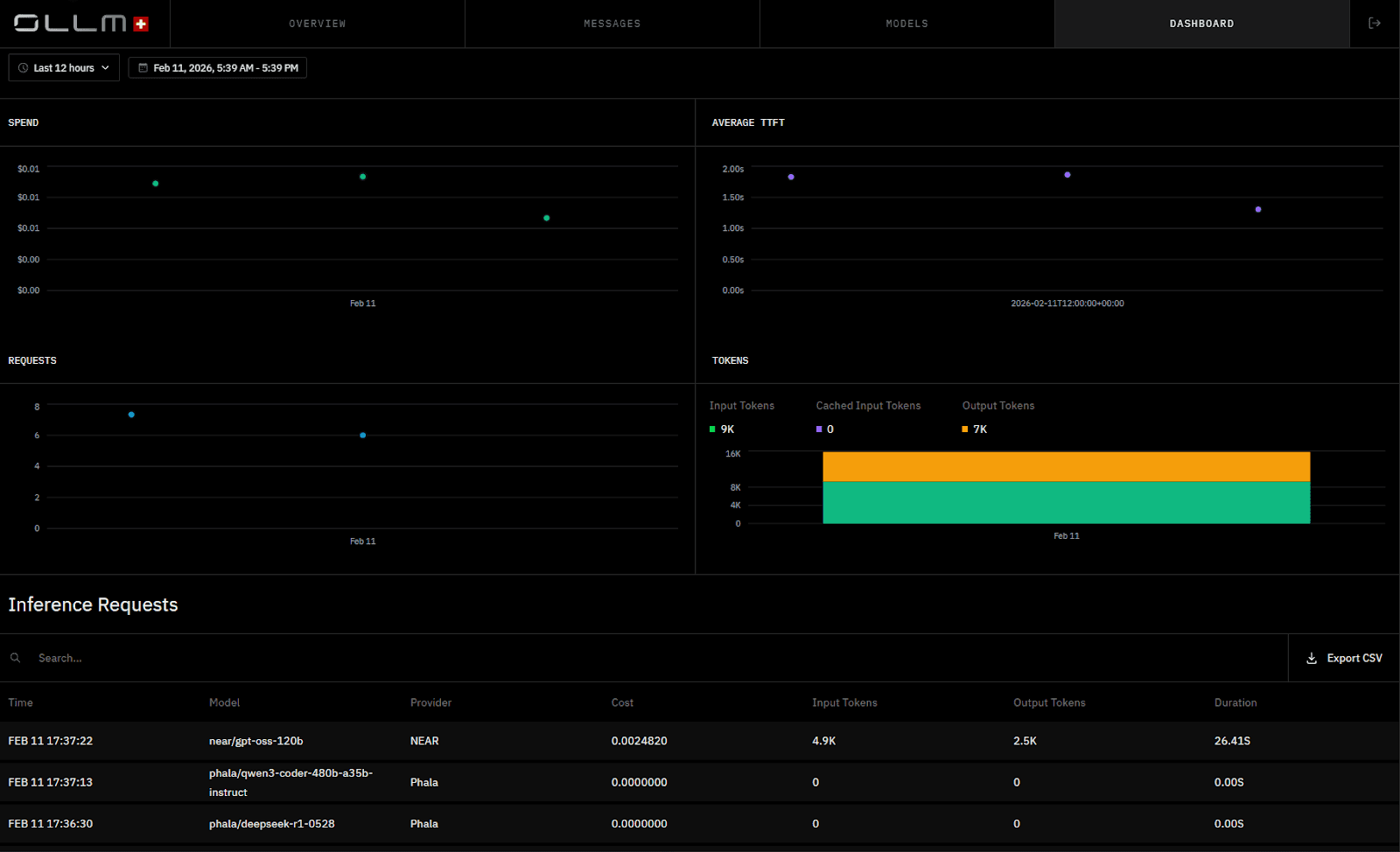

OLLM routes requests to providers and model deployments that support confidential compute and attestation (for example, NEAR- or Phala-backed execution paths), while the gateway itself defaults to not persisting prompt or response content.

How Model-Specific Integrations Work in Enterprise Production Environments

Direct model integrations connect a specific application to a specific LLM provider. For example, a customer support backend may call a GPT-class API for chat responses, a legal-tech platform may integrate directly with a Claude-class model for contract review, or a fintech analytics engine may use an open-weight deployment for transaction summarization. Engineers use the provider’s SDK or REST API, store credentials in backend services, and call the model endpoint from application logic.

A production system, in this context, is one that handles live user traffic, revenue-impacting workflows, or regulated data. Examples include:

A SaaS helpdesk responding to thousands of chats per hour

An HR platform screening resumes in real time

A healthcare application summarizing patient notes

A developer platform exposing AI capabilities through a public API

In these environments, the integration typically looks like this:

The application service calls Provider A’s API directly.

Authentication uses Provider A’s API keys or OAuth credentials.

Rate limits and quotas apply per provider account.

Logs, token usage, and latency metrics live in provider dashboards or custom monitoring systems.

When a second provider is introduced for cost optimization, latency improvements, or redundancy, the pattern is duplicated. Routing logic moves into application code. Engineering teams introduce conditionals such as `if provider == X`. Model switching requires configuration changes or refactoring. Failover logic must be built internally.
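The pattern tends to look something like the sketch below. The two `call_provider_*` functions are hypothetical stand-ins for vendor SDK calls (one of them simulates an outage), so the example runs without network access.

```python
# Hypothetical stand-ins for two vendor SDK calls.
def call_provider_a(prompt: str) -> str:
    return f"[A] {prompt}"


def call_provider_b(prompt: str) -> str:
    # Simulates an outage so the hand-written failover path is exercised.
    raise TimeoutError("provider B unavailable")


def complete(prompt: str, provider: str) -> str:
    """Application-level routing: every new provider adds another branch."""
    if provider == "a":
        return call_provider_a(prompt)
    if provider == "b":
        try:
            return call_provider_b(prompt)
        except TimeoutError:
            # Failover logic is written and maintained inside the application.
            return call_provider_a(prompt)
    raise ValueError(f"unknown provider: {provider}")
```

Each service that talks to a model ends up carrying its own copy of this branching and fallback code.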

Most providers today expose OpenAI-style APIs, which makes initial integration straightforward. For example:

from openai import OpenAI

The surface looks consistent. The complexity emerges when multiple providers are introduced behind similar interfaces.
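To make the shared surface concrete, the sketch below builds (but does not send) an OpenAI-style chat completions request using only the standard library. The base URLs, keys, and model names are placeholders, and the assumption is that both providers accept the same `/chat/completions` path with bearer-token authentication.

```python
import json
import urllib.request


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build, without sending, an OpenAI-style chat completions request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder endpoints and model names, for illustration only.
req_a = build_chat_request("https://api.provider-a.example/v1", "KEY_A", "model-a", "Hello")
req_b = build_chat_request("https://api.provider-b.example/v1", "KEY_B", "model-b", "Hello")
```

Only the base URL, key, and model name differ between the two requests, which is why the first integration feels cheap. The differences show up in what comes back.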

API inconsistencies compound over time. Providers differ in streaming formats, function-calling schemas, token accounting rules, context window limits, and error structures. Engineering teams introduce adapters, normalization layers, and provider-specific retry logic to reconcile these differences.
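A normalization layer of this kind might look like the following sketch. The payload shapes are simplified versions of an OpenAI-style and an Anthropic-style response; the field names should be checked against each provider's current documentation, and the `Completion` type is introduced here purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Completion:
    """Provider-neutral result used by application code (illustrative type)."""
    text: str
    input_tokens: int
    output_tokens: int


def from_openai_style(payload: dict) -> Completion:
    # Text lives under choices[0].message.content; usage uses prompt/completion names.
    usage = payload["usage"]
    return Completion(
        text=payload["choices"][0]["message"]["content"],
        input_tokens=usage["prompt_tokens"],
        output_tokens=usage["completion_tokens"],
    )


def from_anthropic_style(payload: dict) -> Completion:
    # Text is split across typed content blocks; usage uses input/output names.
    usage = payload["usage"]
    text = "".join(
        block["text"] for block in payload["content"] if block.get("type") == "text"
    )
    return Completion(
        text=text,
        input_tokens=usage["input_tokens"],
        output_tokens=usage["output_tokens"],
    )
```

Every additional provider adds another adapter, and streaming, tool calls, and error bodies each need the same treatment.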

Operational complexity extends beyond code. Billing becomes fragmented across vendors with separate invoices, pricing models, and usage dashboards. Cost spikes in one workload may not be visible when monitoring is siloed. Budget forecasting becomes harder as usage scales across providers.

Security and compliance reviews multiply as well. Each provider introduces different retention defaults, encryption guarantees, and isolation controls. Audit and vendor risk assessments expand with every additional integration.

The architecture works. However, as additional providers are added, operational responsibility spreads across engineering, finance, compliance, and reliability teams. Complexity grows not because the system breaks, but because control is distributed across multiple external dependencies.

Architectural Differences Between Direct Integrations and an AI Gateway

Architecture determines how complexity accumulates over time. The impact becomes visible when a single application begins using multiple models for different workloads.

Consider a SaaS platform that runs:

Customer support chat on one model

Resume scoring on another model

Contract summarization on a third model

With direct integrations, each workload embeds provider-specific logic inside its service. The support service implements streaming handling for Provider A. The HR service normalizes token limits for Provider B. The legal workflow implements custom retry logic for Provider C. Routing, credential management, fallback behavior, and rate-limit handling are implemented in separate codebases.

As additional providers are introduced for cost control or redundancy, the integration surface expands. Engineering teams maintain multiple SDKs, reconcile responses across different formats, and duplicate retry logic across services.

An AI gateway centralizes these responsibilities into a dedicated control plane. Customer support, resume scoring, and contract analysis services all send standardized requests to a single endpoint. The gateway executes policy for the selected model alias, resolving it to a configured deployment and applying load balancing, rate-limit-aware routing, and failover logic based on defined policies. The application code remains focused on business functionality rather than on provider coordination.
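A minimal sketch of alias resolution with ordered failover is shown below. It is not OLLM's implementation: the alias name, the two deployment functions, and the `ProviderError` type are all hypothetical, and the primary deployment is hard-coded to fail so that the fallback path is visible.

```python
from typing import Callable


class ProviderError(Exception):
    """Raised by a deployment on rate limiting or an outage (illustrative)."""


# Hypothetical deployments: each is a callable that takes a prompt.
def primary(prompt: str) -> str:
    raise ProviderError("429 rate limited")


def secondary(prompt: str) -> str:
    return f"secondary:{prompt}"


# Alias -> ordered list of deployments to try.
ALIASES: dict[str, list[Callable[[str], str]]] = {
    "support-chat": [primary, secondary],
}


def route(alias: str, prompt: str) -> str:
    """Try each deployment configured for the alias until one succeeds."""
    errors = []
    for deployment in ALIASES[alias]:
        try:
            return deployment(prompt)
        except ProviderError as exc:
            errors.append(f"{deployment.__name__}: {exc}")
    raise ProviderError("all deployments failed: " + "; ".join(errors))
```

Calling `route("support-chat", "hi")` returns the secondary deployment's answer, and the calling service never learns that the primary was throttled.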

The architectural difference becomes clearer as workloads scale. Direct integrations distribute operational logic across services. A gateway consolidates it into one layer.

The comparison below highlights structural differences.

| Category | Model-Specific Integrations | AI Gateway (OLLM) |
| --- | --- | --- |
| API Surface | Multiple SDKs and endpoints | Single unified API |
| Vendor Lock-in | Migration requires refactoring and contract renegotiation | Provider switching is a model or routing configuration change |
| Model Switching | Code or config changes per provider | Only the specified model changes in code or config |
| Authentication | Separate credentials per vendor | Centralized credential management |
| Rate Limits | Managed per provider account | Unified quota and policy control |
| Failover | Implemented in the application logic | Built into the routing layer |
| Observability | Provider dashboards + custom logs | Aggregated visibility across providers |

Operational overhead grows with each direct integration. A gateway reduces overhead by consolidating routing, authentication, and enforcement into a single layer. This consolidation does not remove provider dependencies, but it isolates them from application logic and reduces long-term maintenance complexity.

Operational consolidation is only one dimension of the difference. As LLM workloads begin processing regulated, confidential, or customer-sensitive data, architectural design directly affects security exposure. The next distinction moves from integration complexity to data risk: how inference data is handled during and after execution.

Zero Data Retention and Why It Changes the Risk Model

Data retention defines the blast radius of a security incident. If prompts and responses are stored, they become retrievable assets. If they are not stored, there is no historical dataset to extract.

OLLM operates with zero data retention by default. Unless storage is explicitly configured, the gateway does not persist prompt or response data in logs, databases, or internal storage layers. After a request completes, no prompts or outputs remain stored in the system, so there is no inference history and no retrievable conversation archive.

This approach differs from policies that state “we do not train on your data.” Training restrictions address model improvement. Retention policies address storage risk. Zero retention eliminates a data pool that could be queried, leaked, or subpoenaed.

For regulated environments, this changes the compliance posture:

No prompt database to secure.

No historical response logs to protect.

No internal data lake containing sensitive inference content.

Reduced impact scope in breach scenarios.

Zero retention does not replace encryption or runtime isolation. It complements them. Encryption protects data in transit and at rest. Zero retention removes the long-term storage exposure itself, because content that is never stored cannot later be read from storage.
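One way to picture the retention boundary described in this section is a log record that carries operational metadata only. The sketch below is an assumption about how such a record could be built, not a description of OLLM's internals, and the field names are hypothetical.

```python
def metadata_record(request: dict, response: dict, started: float, finished: float) -> dict:
    """Build a log record containing operational metadata only.

    Prompt and response text are deliberately never copied into the record.
    """
    return {
        "request_id": request["request_id"],
        "model": request["model"],
        "input_tokens": response["usage"]["input_tokens"],
        "output_tokens": response["usage"]["output_tokens"],
        "latency_ms": round((finished - started) * 1000),
    }
```

Because the record is built from an explicit allowlist of fields, adding a new field to the request cannot silently leak content into logs.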

Trusted Execution Environments and Cryptographic Attestation Explained

Runtime isolation determines who can access data during inference. Even if data is encrypted in transit and not retained after execution, it must exist in plaintext in memory while the model processes it. Trusted Execution Environments address that exposure window.

A Trusted Execution Environment (TEE) isolates code and data at the hardware level. The processor creates a protected enclave in which memory remains inaccessible to the host operating system, the hypervisor, and cloud administrators. Only verified code can execute inside that enclave.

OLLM incorporates hardware-backed TEEs and provides cryptographic attestation proofs of secure execution. These proofs verify that:

The workload ran inside a genuine TEE.

The enclave was not modified.

The expected code and configuration were loaded.

OLLM supports attestation mechanisms such as:

Intel TDX attestation, which validates secure virtual machine isolation at the CPU level.

NVIDIA GPU attestation, which verifies secure execution when GPU acceleration is involved.

Cryptographic attestation shifts compliance from contractual assurance to verifiable evidence. Instead of relying solely on vendor statements, teams can validate that inference occurred inside a hardware-isolated environment. This approach strengthens the audit posture and reduces reliance on trust assumptions at the infrastructure layer.
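The last step of such a validation, comparing a reported measurement against an allowlist of expected values, can be sketched as follows. This is deliberately partial: real Intel TDX or NVIDIA GPU attestation also requires verifying the report's signature chain back to the hardware vendor and checking freshness, which needs the vendors' own verification tooling and is not shown. The `report` dictionary, the `measure` helper, and the use of SHA-384 as a stand-in measurement are assumptions made for illustration.

```python
import hashlib
import hmac


def measure(image: bytes) -> str:
    """Stand-in for a hardware measurement: a SHA-384 digest of the workload."""
    return hashlib.sha384(image).hexdigest()


def measurement_is_trusted(report: dict, trusted: set[str]) -> bool:
    """Check a (hypothetical, already signature-verified) report against an allowlist."""
    reported = report.get("measurement", "")
    # Constant-time comparison against each allowlisted measurement.
    return any(hmac.compare_digest(reported, expected) for expected in trusted)
```

In this sketch, a workload whose bytes differ from the approved image produces a different digest and is rejected, which mirrors the "enclave was not modified" property listed above.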

How Does an AI Gateway Reshape Infrastructure-Level Compliance?

Security architecture differs significantly between direct integrations and a hardened AI gateway. The difference is not only in encryption. It appears in retention policies, runtime isolation, and verifiability.

Direct integrations depend on each provider’s infrastructure controls. Some providers offer strong encryption and isolation. Others vary in retention defaults, logging practices, and hardware isolation guarantees. Compliance teams must review each provider independently.

An enterprise AI gateway, such as OLLM, standardizes these controls at a single enforcement layer. Zero data retention is applied at the gateway, while hardware-backed isolation and cryptographic attestation apply consistently across the routed providers and models that support them.

The comparison below highlights infrastructure-level differences.

| Security Layer | Model-Specific Integrations | OLLM Gateway |
| --- | --- | --- |
| Data Retention | Provider-dependent policies | Zero data retention |
| Prompt Storage | May log or retain by default | No persistent prompt storage |
| Encryption | Typically in transit and at rest | Encryption at every layer |
| Runtime Isolation | Varies by vendor | TEE-backed execution |
| Attestation Proofs | Rarely exposed to customers | Cryptographic TEE attestation |
| Compliance Verification | Contractual assurance | Verifiable hardware-backed proof |

Infrastructure standardization reduces audit complexity. Instead of validating controls across multiple vendors, organizations validate the gateway’s enforced posture. This reduces compliance surface area and creates a consistent security baseline across model providers.

How OLLM Scales Enterprise LLM Workloads Without Application Changes

Scaling LLM workloads requires both capacity flexibility and stable rate limits. Direct integrations scale per provider account. Each vendor enforces independent quotas, burst limits, and throttling rules. As usage grows, teams must either negotiate capacity separately or refactor the traffic distribution logic.

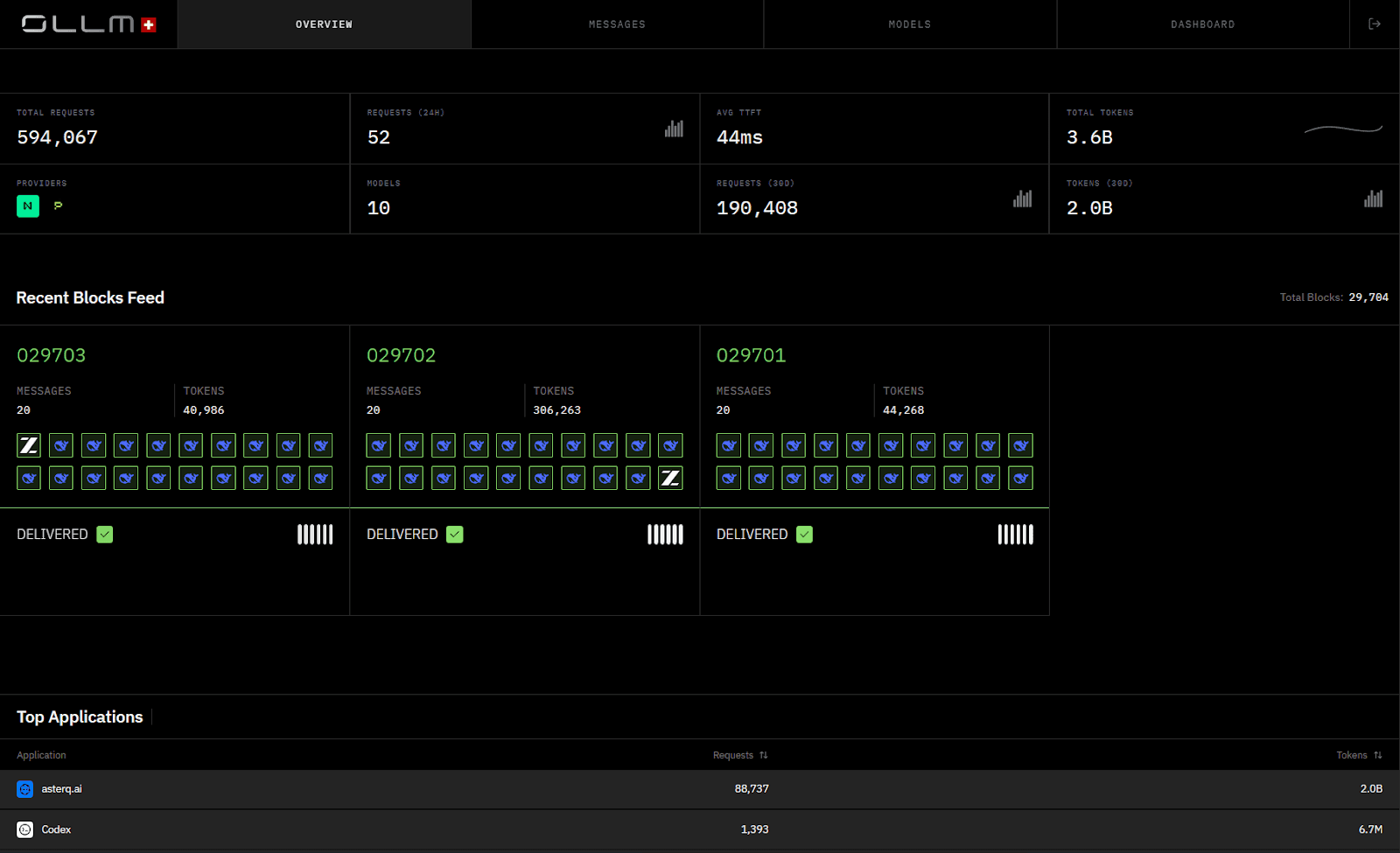

OLLM centralizes scaling through its gateway layer. Applications continue to call a single API, while capacity adjustments occur behind the abstraction layer. Scaling typically involves:

Loading additional credits to expand usage capacity.

Reserving dedicated capacity through sales agreements for predictable high-volume workloads.

Configuring routing rules to distribute traffic across multiple providers.

Leveraging multi-model failover to prevent downtime during provider throttling.

Centralized quota management simplifies forecasting. Instead of tracking limits across multiple vendor dashboards, usage and capacity are managed within a single control plane. If traffic approaches predefined thresholds, routing can shift to alternative providers without application-level rewrites.
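Threshold-based shifting could be sketched as below. The 80% threshold, the `ProviderQuota` type, and the provider names are assumptions for the example rather than OLLM defaults.

```python
from dataclasses import dataclass


@dataclass
class ProviderQuota:
    name: str
    limit: int     # requests allowed in the current window (assumed > 0)
    used: int = 0  # requests already consumed in the current window

    @property
    def utilization(self) -> float:
        return self.used / self.limit


def pick_provider(providers: list[ProviderQuota], threshold: float = 0.8) -> ProviderQuota:
    """Prefer the first provider under the threshold, else the least utilized one."""
    for provider in providers:
        if provider.utilization < threshold:
            return provider
    return min(providers, key=lambda p: p.utilization)
```

The list order encodes preference (for example, cheapest first), so traffic only moves to an alternative when the preferred provider nears its limit.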

Centralized billing simplifies financial oversight. Instead of reconciling multiple provider invoices and dashboards, usage and spend are tracked through a single gateway layer. This improves budget forecasting and reduces the risk of unnoticed cost spikes across distributed model integrations.
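As a rough illustration of single-layer spend tracking, the sketch below totals cost per workload from usage records. It assumes the gateway emits one record per request with a provider, a workload label, and token counts; the record fields and the prices are hypothetical.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical prices in USD per 1,000 tokens; real pricing differs per vendor.
PRICE_PER_1K = {
    "provider_a": {"input": Decimal("0.50"), "output": Decimal("1.50")},
    "provider_b": {"input": Decimal("0.25"), "output": Decimal("1.00")},
}


def spend_by_workload(records: list[dict]) -> dict[str, Decimal]:
    """Sum cost per workload across providers from per-request usage records."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    for record in records:
        price = PRICE_PER_1K[record["provider"]]
        cost = (
            price["input"] * record["input_tokens"]
            + price["output"] * record["output_tokens"]
        ) / 1000
        totals[record["workload"]] += cost
    return dict(totals)
```

Using `Decimal` rather than floats keeps the totals exact, which matters once they are compared against invoices.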

A single-provider integration increases the blast radius of outages. If that provider experiences downtime or regional degradation, application availability depends entirely on that vendor’s infrastructure. A gateway-based architecture can reroute traffic across alternative providers to reduce outage exposure and maintain service continuity without modifying application code.

This architecture reduces operational friction caused by rate limit spikes and sudden demand increases. Scaling becomes a capacity configuration decision rather than a code change.

Engineering Overhead and Maintenance Costs in Multi-Provider LLM Integrations

Engineering overhead grows with the number of model providers, because each one brings its own SDK integration. Each model-specific integration introduces new SDK dependencies, authentication flows, logging pipelines, and rate-limit handling logic. Over time, these responsibilities expand beyond the initial implementation effort.

Direct integrations typically require:

Maintaining multiple SDK versions.

Adapting to provider-specific API changes.

Revalidating compliance posture per vendor.

Correlating request logs across multiple provider dashboards.

Tracing failed requests when routing logic spans vendors.

Aggregating audit trails during security investigations.

Implementing custom failover and retry logic.

Managing separate monitoring and alerting rules.

When incidents occur, distributed logging across providers complicates root cause analysis and slows response times.
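The correlation work involved can be sketched as a merge of log entries from several sources, grouped by a shared request ID. The sketch assumes every source records a `request_id` and a comparable timestamp `ts`, which in practice is often the hard part.

```python
from collections import defaultdict


def correlate(*sources: list[dict]) -> dict[str, list[dict]]:
    """Group log entries from several sources by request_id, ordered by timestamp."""
    grouped: dict[str, list[dict]] = defaultdict(list)
    for source in sources:
        for entry in source:
            grouped[entry["request_id"]].append(entry)
    return {
        request_id: sorted(entries, key=lambda e: e["ts"])
        for request_id, entries in grouped.items()
    }
```

With direct integrations this merge has to be rebuilt per provider export format; behind a gateway the entries already share one schema.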

This fragmentation also increases maintenance costs. Security reviews must examine each provider independently. Incident response must determine whether issues originate in application logic or a specific vendor integration.

An AI gateway shifts these responsibilities into a centralized layer. Application services interact with one API. Provider changes remain isolated within the gateway. Security controls and routing policies remain consistent across providers.

Long-term, the tradeoff becomes clear. Direct integrations may appear simpler initially. At scale, centralized abstraction reduces repetitive integration work, limits compliance surface area, and isolates provider-specific changes from business logic.

Choosing the Right Architecture for Enterprise AI Deployments

The choice of architecture depends on workload sensitivity, scale, and operational constraints. Direct integrations can work well for single-provider use cases with low regulatory exposure. A small team integrating a single model into a contained application may not require additional abstraction layers.

Direct integrations are typically suitable when:

Only one provider is used.

Data sensitivity is low.

Compliance requirements are minimal.

Model switching is unlikely.

Scaling needs are predictable and modest.

An AI gateway becomes more relevant as complexity increases. Multi-provider strategies, regulated data handling, and enterprise audit requirements introduce coordination overhead that benefits from centralization.

An AI gateway such as OLLM is more appropriate when:

Multiple LLM providers are required.

Sensitive or regulated data is processed.

Zero data retention is a requirement.

Verifiable runtime isolation is needed.

Capacity must scale without application rewrites.

The distinction is architectural rather than promotional. Direct integrations prioritize simplicity at a small scale. As deployments expand, an enterprise AI gateway prioritizes centralized control, verifiable security, and operational consistency.

Conclusion: Architectural Control Determines Long-Term Security and Flexibility

Architectural choices shape how AI systems behave at scale, under regulatory scrutiny, and under operational pressure. Direct model integrations provide speed for narrow use cases, but they distribute security, routing, and compliance responsibilities across multiple vendors. An AI gateway centralizes those responsibilities into a dedicated control plane. OLLM extends that model further by supporting zero data retention, hardware-backed Trusted Execution Environments with Intel TDX and NVIDIA GPU attestation, and cryptographic proof of secure execution. The difference is not only consolidation. The difference is verifiable isolation and reduced data exposure.

Teams evaluating LLM infrastructure should assess more than latency and cost. Evaluate data retention posture, runtime isolation guarantees, attestation capabilities, and scaling mechanics. If enterprise workloads require verifiable privacy, centralized policy enforcement, and flexible multi-provider routing, an AI gateway approach warrants serious consideration. Reviewing your current integration surface and compliance assumptions is the logical next step before production usage expands further.

FAQ

1. What is the difference between an AI gateway and direct LLM API integration?

An AI gateway abstracts multiple large language model providers behind a single API and centralized control plane. Direct LLM API integration connects an application to one provider’s SDK or endpoint. The key differences appear in routing flexibility, rate limit management, security enforcement, observability, and vendor lock-in. An AI gateway reduces integration sprawl and centralizes policy enforcement across providers.

2. How does zero data retention improve LLM security and compliance posture?

Zero data retention eliminates persistent storage of prompts and responses after inference completes. Without stored prompt logs or response databases, there is no retrievable historical dataset to exfiltrate or subpoena. This materially reduces the scope of breach impact and simplifies compliance audits in regulated environments such as healthcare, finance, and government deployments.

3. What are Trusted Execution Environments (TEE) and how do cryptographic attestation proofs work?

A Trusted Execution Environment isolates workloads at the hardware level using secure enclaves. During inference, the model runs in protected memory, inaccessible to the host operating system or cloud administrators. Cryptographic attestation generates verifiable proofs that confirm the enclave’s integrity, code identity, and configuration. These proofs enable enterprises to validate runtime isolation without relying solely on vendor assurances.

4. How does OLLM implement Intel TDX and NVIDIA GPU attestation for secure AI inference?

OLLM leverages hardware-backed isolation mechanisms such as Intel TDX for secure virtual machine isolation and NVIDIA GPU attestation for protected accelerator execution. The platform provides cryptographic attestation proofs that verify inference workloads executed inside genuine, untampered TEEs. This enables verifiable privacy guarantees across CPU and GPU-based LLM workloads.

5. How does OLLM scale LLM workloads without hitting provider rate limits?

OLLM centralizes quota management and routing across aggregated LLM providers. Scaling can be handled by loading additional credits, reserving dedicated capacity, or distributing traffic across multiple models through routing policies. Multi-provider failover reduces dependency on a single vendor’s rate limits, enabling consistent throughput without requiring application-level refactoring.